Graphene Violated a 172-Year Physics Law by 200x — and the Invoice Is Finally Due

Summary

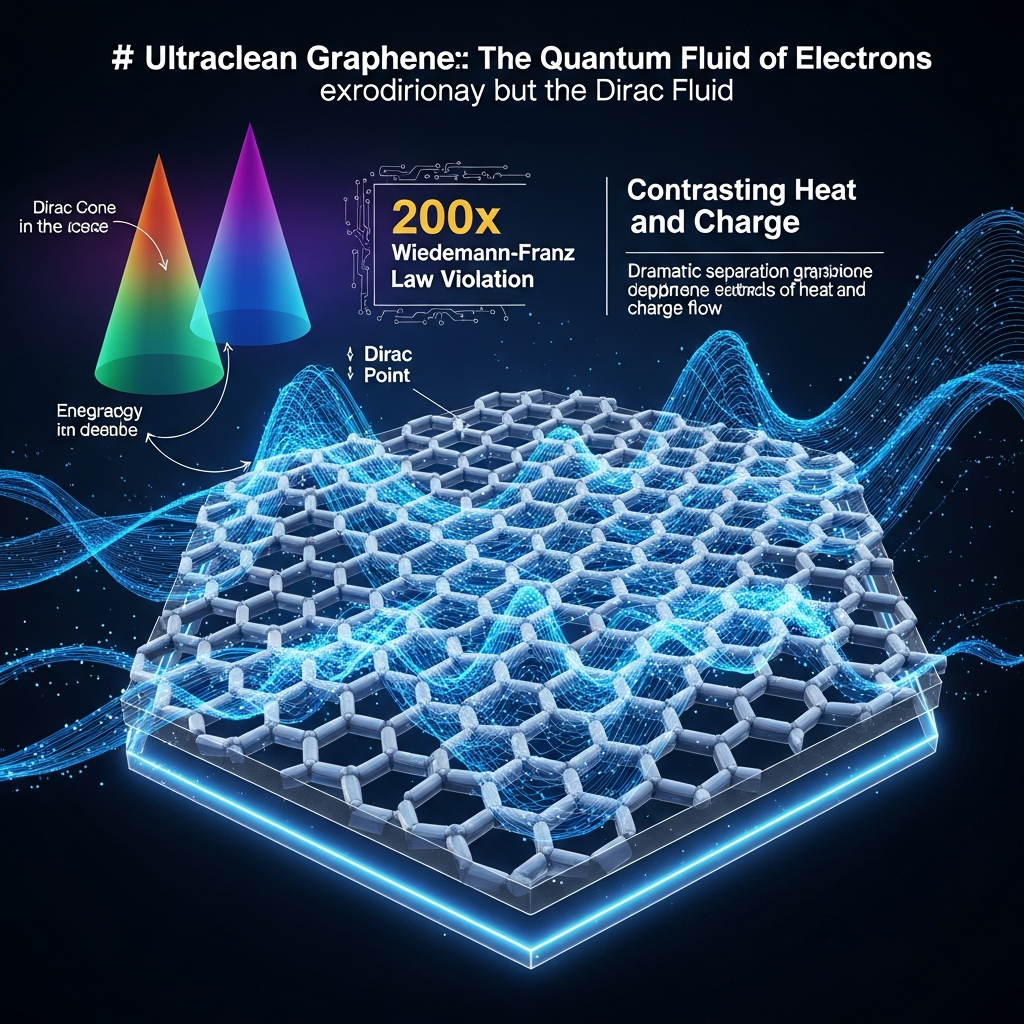

Researchers from the Indian Institute of Science (IISc) and Japan's National Institute for Materials Science (NIMS) have published findings in Nature Physics confirming that electrons in ultraclean graphene behave not as individual particles but as a collective quantum fluid, a "Dirac fluid," in which the 172-year-old Wiedemann-Franz law governing the ratio between thermal and electrical conductivity is violated by a factor exceeding 200. This result extends the landmark 2016 Harvard observation of a roughly tenfold violation by another order of magnitude, with the decisive advance attributable to the unprecedented purity of hexagonal boron nitride (hBN) crystals produced by NIMS researchers Watanabe and Taniguchi, which shielded graphene from impurity scattering and enabled genuine collective electron flow. Remarkably, the mathematical equations governing this Dirac fluid are identical to those describing the quark-gluon plasma momentarily produced at CERN at temperatures exceeding one trillion degrees, a demonstration of deep physical universality bridging 14 orders of magnitude in temperature. On the applied side, the phenomenon provides a theoretical foundation for next-generation quantum sensors capable of detecting ultraweak magnetic fields without the liquid-helium cooling requirements of current SQUID systems, addressing a market projected to expand from roughly $479 million in 2026 to as much as $60 billion by 2040. Structurally, this discovery represents a clear data point in the accelerating shift of fundamental-science leadership toward Asia: India now ranks third globally in research paper output, IISc claims the world's top citation-per-paper index in the QS 2026 rankings, and India's science and technology budget surged 57% year-over-year in fiscal 2025-26. Together these signal that the era of exclusively Western-led physics breakthroughs may be drawing to a close.

Key Points

The Scientific Reality Behind a 200x Physics Law Violation

The Wiedemann-Franz (WF) law, established in 1853, holds that in metals the ratio of thermal conductivity to electrical conductivity scales proportionally with temperature — a relationship that held with extraordinary precision across 172 years of engineering and materials science. The fact that this ratio is violated in graphene by a factor exceeding 200 is not a minor anomaly; it signals the existence of a completely different physical regime where electrons stop behaving as independent particles and begin acting as a collective quantum fluid. The IISc research team observed that near graphene's charge neutrality point, as electrical conductivity climbed, thermal conductivity actually fell — an inverse relationship that semiclassical theory predicts cannot exist, yet was measured at a magnitude 200 times larger than theoretical expectations. Compared to the 2016 Harvard result in Science, which documented a roughly tenfold violation, the IISc-NIMS result represents a qualitative leap rather than a quantitative extension — the key advance was the unprecedented purity of hBN crystals from NIMS, which reduced impurity scattering enough to allow true collective electron behavior to dominate. This discovery forces a philosophical recalibration: the WF law has not been broken in the sense of being wrong, but demoted from universal principle to accurate approximation valid for conventional Fermi liquid metals, which is precisely how Newtonian mechanics was demoted — not destroyed but encompassed — when relativity arrived. The broader message is that every physics law carries an implicit set of conditions, and graphene has now made visible a boundary that most physicists assumed lay much further away.
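For readers who want the relationship in numbers, here is a minimal sketch. It computes the textbook Sommerfeld value of the Lorenz number, L0 = (π²/3)(k_B/e)², and expresses a measured ratio κ/(σT) as a multiple of it. The function names and the sample conductivity and temperature are illustrative assumptions, not values taken from the IISc-NIMS paper.

```python
import math

# Exact SI values of the Boltzmann constant and the elementary charge.
K_B = 1.380649e-23          # J/K
E_CHARGE = 1.602176634e-19  # C


def sommerfeld_lorenz_number() -> float:
    """Free-electron (Sommerfeld) Lorenz number L0, in W*Ohm/K^2."""
    return (math.pi ** 2 / 3) * (K_B / E_CHARGE) ** 2


def lorenz_ratio(kappa: float, sigma: float, temperature: float) -> float:
    """Measured ratio kappa / (sigma * T); units must be mutually consistent."""
    return kappa / (sigma * temperature)


def wf_violation_factor(kappa: float, sigma: float, temperature: float) -> float:
    """How many times larger the measured ratio is than L0."""
    return lorenz_ratio(kappa, sigma, temperature) / sommerfeld_lorenz_number()


# Hypothetical inputs, constructed so the factor comes out at 200.
L0 = sommerfeld_lorenz_number()
sigma = 1.0e-3                      # sheet conductance in siemens (assumed)
temperature = 60.0                  # kelvin (assumed)
kappa = 200 * L0 * sigma * temperature

print(f"L0 = {L0:.4e} W Ohm / K^2")
print(f"violation factor = {wf_violation_factor(kappa, sigma, temperature):.1f}")
```

A factor of 1 means the Wiedemann-Franz law holds exactly; the article's headline figure corresponds to a factor above 200.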

The Dirac Fluid — When Electrons Stop Being Particles and Start Being Water

Graphene's charge neutrality point is where the extraordinary happens: electrons, which in ordinary metals move independently as a Fermi liquid of separate particles, abruptly shift into a collective Dirac fluid in which they flow like water, sharing momentum and energy as a continuous medium. This transition had been theoretically predicted, but it had not been experimentally confirmed at the level of precision that the IISc-NIMS experiment now establishes: the Dirac fluid's viscosity, taken relative to its entropy density, was measured to come within a factor of four of the KSS bound, the conjectured quantum lower limit on how small that ratio can be for any fluid. Prior work published in Physical Review Letters had placed graphene alongside quark-gluon plasma and cold atomic gases in the category of nearly perfect liquids, but the IISc result anchors this identification with the most precise measurement yet achieved in any condensed-matter system. The transition from Fermi liquid to Dirac fluid is not merely a curiosity. It demands a new theoretical framework for condensed-matter physics, because the standard tools used to describe ordinary metals simply do not apply. Professor Arindam Ghosh's remark that it is surprising that even after 20 years, we can do so many things with just one layer of graphene captures precisely this sense of a material that continues to generate genuinely new physics rather than elaborating on already-understood phenomena.
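For reference, the KSS (Kovtun-Son-Starinets) bound is conventionally written as a lower limit on the ratio of shear viscosity η to entropy density s. The expression below is the standard form of the conjecture, with the numerical value worked out from SI constants. The second line simply restates the article's "within a factor of four" figure in the same symbols and is not a quoted result from the paper.

```latex
\frac{\eta}{s} \;\ge\; \frac{\hbar}{4\pi k_B} \;\approx\; 6.08 \times 10^{-13}\ \mathrm{K\,s}
\qquad\text{and}\qquad
\left.\frac{\eta}{s}\right|_{\text{Dirac fluid}} \;\lesssim\; 4 \cdot \frac{\hbar}{4\pi k_B} \;=\; \frac{\hbar}{\pi k_B}
```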

Tabletop Cosmology — How Graphene Shares Math With CERN's Quark-Gluon Plasma

The most counterintuitive result in the IISc-NIMS paper is the mathematical bridge it confirms between graphene at negative 213 degrees Celsius and the quark-gluon plasma created at CERN at temperatures exceeding one trillion degrees. According to a detailed arXiv analysis, both systems are governed by the same Lorenz ratio formula, with the ratio determined by the enthalpy density relative to the net charge-carrier density. The two differ only in the dimensionality of their density of states (linear in 2D graphene, quadratic in 3D quark-gluon plasma). This means a university laboratory in Bengaluru is producing, in a tabletop experiment, a mathematical analogue of the physical state that CERN's 22-nation, $1.4-billion-per-year facility creates for microseconds at a time. The implications for research economics are substantial: if condensed-matter experiments can serve as proxies for high-energy physics phenomena, hundreds of laboratories worldwide can contribute to questions in black hole thermodynamics, quantum criticality, and strong-coupling physics that previously required particle accelerator access. Of course, graphene cannot replicate all aspects of quark-gluon plasma (particle creation and annihilation are not accessible in a 2D carbon sheet), but the shared transport physics is sufficient to illuminate fundamental questions about universal behavior at quantum critical points. The universality this reveals, that nature uses the same mathematical language across 14 orders of magnitude in temperature, is itself one of the deepest unsolved mysteries in fundamental physics.
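To make the shape of that dependence concrete, here is a rough sketch of a functional form often used in the hydrodynamic graphene literature, in which the Lorenz ratio peaks at charge neutrality and falls off as the net carrier density n grows past a crossover density n0 set by the fluid's enthalpy density. Treat the formula itself as an assumption: it is not quoted from the IISc-NIMS paper or the arXiv analysis, and the parameter values are hypothetical placeholders.

```python
def hydrodynamic_lorenz_ratio(n: float, n0: float, peak_ratio: float) -> float:
    """Assumed hydrodynamic form: L(n) = L_peak / (1 + (n / n0)^2)^2.

    n          -- net charge-carrier density (electrons minus holes)
    n0         -- crossover density, set by the enthalpy density (assumed input)
    peak_ratio -- Lorenz ratio at charge neutrality, in units of L0
    """
    if n0 <= 0:
        raise ValueError("n0 must be positive")
    return peak_ratio / (1.0 + (n / n0) ** 2) ** 2


# Hypothetical parameters: a 200x peak and a crossover density of 1e10 cm^-2.
PEAK = 200.0
N0 = 1.0e10

for density in (0.0, 0.5e10, 1.0e10, 2.0e10, 5.0e10):
    ratio = hydrodynamic_lorenz_ratio(density, N0, PEAK)
    print(f"n = {density:.1e} cm^-2  ->  L/L0 = {ratio:.2f}")
```

In this form the enhancement is confined to a narrow window around charge neutrality, which is one way to see why sample purity matters so much: residual charge inhomogeneity from impurities keeps the effective n away from zero.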

The Structural Rise of Global South Basic Science and the Asian Collaboration Model

This discovery carries geopolitical significance that rivals its scientific significance. The first author, Aniket Majumdar, is an IISc PhD student; the corresponding author, Professor Arindam Ghosh, is an IISc faculty member; the critical enabling materials came from NIMS in Japan. An Asian collaboration, with no Western institution in a leading role, has produced a result that shakes a 172-year-old foundation of Western physics. India's research output grew nearly sixfold from 34,000 papers in 2010 to 195,000 in 2024, reaching third globally, while IISc achieved a perfect score on the QS 2026 citation-per-paper index. India's science budget surged 57% in fiscal 2025-26, with 20,000 crore rupees allocated to a new R&D Innovation program. During the Scopus 2020-2025 measurement period, India ranked first globally in paper volume. The IISc-NIMS partnership is structurally elegant: India contributed theoretical expertise and experimental design in a tradition stretching from Ramanujan to Chandrasekhar, while Japan contributed the world's best materials through NIMS, with each country's comparative advantage fitting the other's gap precisely. This bilateral comparative-advantage model could prove more efficient than the CERN model of pooling many nations' budgets into a single shared infrastructure, and its success here makes it a benchmark for future Asia-based basic science partnerships.

The Quantum Sensor Application Pathway and Its Market Potential

IISc's official press release explicitly identifies the Dirac fluid's properties as the basis for next-generation quantum sensors capable of amplifying extremely weak electrical signals and detecting faint magnetic fields with unprecedented sensitivity. The current standard for high-sensitivity magnetic field detection in medicine — SQUID sensors used in magnetoencephalography (MEG) and magnetocardiography (MCG) — requires liquid-helium cooling to temperatures near absolute zero, creating cost and portability barriers that prevent widespread clinical deployment. A graphene-based sensor capable of femtotesla sensitivity at room temperature would eliminate those barriers, enabling wearable brain-activity monitors, portable geological survey tools, and defense applications including submarine magnetic-anomaly detection from extended standoff distances. McKinsey projects the quantum sensor market at $700 million to $1 billion by 2030 and potentially up to $10 billion by 2035; broader estimates extend to $60 billion by 2040 across all quantum sensing modalities. The competitive landscape includes diamond NV centers — which QuantumDiamonds has already commercialized for semiconductor inspection — and SQUIDs entrenched in medical imaging, meaning graphene sensors face established competition even as their theoretical properties exceed what either competitor offers on paper. The realistic commercial timeline is 8-12 years, but the theoretical foundation has now been experimentally established, which is the prerequisite step that makes everything else possible.

Positive & Negative Analysis

Positive Aspects

- A Real Physical Foundation for the Quantum Sensor Technology Leap

The IISc-NIMS experiment does not merely predict that graphene could make better sensors — it provides experimental confirmation that the underlying physics of the Dirac fluid enables the precise behaviors needed: strong signal amplification of weak electrical signals and sensitive detection of faint magnetic fields. This distinction between theoretical hope and experimentally confirmed physical principle is crucial for attracting the research investment and engineering attention that moves technology toward commercial reality. The current gold standard for sensitive biomagnetic measurement — SQUID-based MEG systems costing millions of dollars per installation and requiring annual liquid-helium maintenance budgets of tens of thousands of dollars — represents exactly the kind of technology barrier that graphene Dirac fluid sensors could circumvent. McKinsey's identification of quantum biosensors as candidates for wearable commercialization by 2030, specifically noting that cryogenic cooling may not be required, aligns precisely with the physical properties the IISc result confirms. The quantum sensor market's projection from $479 million in 2026 toward $60 billion by 2040 provides the economic incentive structure that makes sustained engineering investment rational, and graphene's unique combination of sensitivity, flexibility, and room-temperature operation creates a differentiated competitive position that diamond NV centers and SQUIDs cannot replicate.

- Tabletop Cosmology as a Revolutionary and Democratizing Research Paradigm

The mathematical equivalence between graphene's Dirac fluid and CERN's quark-gluon plasma transport physics means that condensed-matter laboratories can now address questions in fundamental cosmology and particle theory that previously required particle accelerator access — a shift with profound implications for how basic physics research is conducted and who can participate. CERN requires 22 nations' combined budgets and maintains access for a limited number of affiliated institutions, creating an inherent bottleneck in who can contribute to high-energy physics questions. Graphene experiments are accessible to any well-equipped condensed-matter laboratory, meaning hundreds of institutions worldwide could contribute to questions about black hole thermodynamics, quantum criticality, and strong-coupling physics. The ability to study phenomena stably over extended measurement windows — unlike CERN's microsecond quark-gluon plasma bursts — provides experimental advantages that could yield insights unavailable from accelerator-based methods. This is a methodological revolution comparable in significance to Galileo's use of the telescope: a new instrument that democratizes access to a previously restricted domain of inquiry, with a corresponding acceleration in the pace of discovery.

- Validation of the Asian Bilateral Comparative-Advantage Collaboration Model

The IISc-NIMS partnership demonstrates with a landmark result that world-leading basic science does not require the infrastructure of CERN, the budget of the NIH, or the institutional prestige of Harvard or MIT — it requires precisely matched comparative advantages from two committed institutions. India contributed theoretical depth and experimental capability in a research tradition with roots stretching centuries; Japan contributed the world's most advanced materials through NIMS, which holds a near-monopoly on the highest-purity hBN crystals available globally. The combination produced a Nature Physics paper that advances the field by a factor of 20 over the previous state of the art. India's DST budget surge of 57% in fiscal 2025-26 provides the financial foundation for sustaining and expanding this model, and IISc's perfect QS citation-per-paper score demonstrates that elite output quality is already established at the institutional level. If India-Korea, India-Taiwan, and India-Singapore pairings in basic science research replicate this structural model — theory and measurement capability from India matched with precision materials or instrumentation from partner countries — the Asian science ecosystem could develop a multi-node network of bilateral partnerships that competes effectively with the Western multi-national consortium model.

- Definitive Confirmation of Graphene's Long-Term Research Platform Value

The 2025 Dirac fluid result, arriving twenty-one years after graphene's initial isolation, is the strongest possible refutation of the criticism that graphene is a material of promise that never delivers. Most materials science hot topics peak in discovery intensity within the first decade of study, as the obvious physical properties are catalogued and research interest migrates elsewhere. Graphene has followed the opposite trajectory: its most fundamental surprises (magic-angle superconductivity in 2018, the Dirac fluid in 2025) have arrived in the second decade and beyond. The Dirac cone band structure that gives graphene its unusual electronic character continues to reveal new emergent phenomena in physical regimes that were not accessible until sample quality improved sufficiently. For research funders and policymakers, this establishes a compelling precedent: basic science investment in a material or phenomenon can yield foundational discoveries on timescales of twenty years or more, and the value of sustained investment without near-term commercial milestones is not abstract idealism but documented historical fact. Professor Ghosh's own surprise at what one layer of carbon can still produce twenty years in is the most credible endorsement possible for continued long-term investment.

- Cost Democratization of Fundamental Physics Research

The CERN model of fundamental physics research is inherently exclusionary: it requires a 22-nation consortium, a multi-billion-dollar annual operating budget, and a physical facility that concentrates access geographically and institutionally. The tabletop graphene experiment demonstrated here requires a well-equipped condensed-matter laboratory, access to high-quality hBN crystals, and the scientific insight to know which measurements to make. This difference in access threshold is not merely a matter of equity — it is a scientific productivity argument. More researchers approaching fundamental questions from more diverse cultural and intellectual backgrounds increases the probability that existing paradigms will be challenged from unexpected angles. The fact that the 200x Wiedemann-Franz violation was discovered in Bengaluru rather than Cambridge or Geneva is itself evidence that geographic and institutional diversification of physics research produces genuine scientific dividends. Every major condensed-matter laboratory in the world is now a potential contributor to tabletop cosmology questions — a research constituency of hundreds of institutions rather than the dozens with CERN affiliation, and a corresponding multiplication of the experimental approaches that can be brought to bear.

Concerns

- The $56,000-Per-Square-Meter Production Cost Barrier

Graphenea's commercial pricing data places the current cost of graphene production at approximately $56,000 per square meter, a figure that represents a fundamental obstacle to scaling any graphene-based technology from laboratory proof-of-concept to manufacturable product. While CVD film prices declined roughly 15% in early 2024, the pace of cost reduction under current production approaches would require well over a decade to reach price points compatible with mass-market deployment in electronics or medical devices. The ultraclean graphene required for the Dirac fluid phenomenon, sandwiched between NIMS-grade hBN and essentially free of impurity scattering, is substantially more difficult to produce than standard commercial-grade graphene, meaning even the $56,000 benchmark likely understates the cost of the specific material needed to replicate the IISc result at scale. The graphene market is projected to grow from $1.32 billion in 2024 to $2.98 billion in 2028, but that growth is concentrated in applications where graphene serves as an additive in composites or coatings, not as a precision electronic device component. The fundamental tension between materials-push physics and market-pull economics has characterized graphene's commercial trajectory for its entire 21-year history, and this discovery does nothing structurally to resolve it.
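The "well over a decade" estimate can be sanity-checked with a simple constant-rate decline model. The sketch below assumes the roughly 15% price drop repeats every year, which the source does not claim, and uses a hypothetical target of one tenth of today's price; both are assumptions for illustration only.

```python
import math


def years_to_reach(current_price: float, target_price: float,
                   annual_decline: float) -> float:
    """Years for a price to fall to target at a constant fractional decline per year.

    Solves current * (1 - annual_decline)**t = target for t.
    """
    if current_price <= 0 or target_price <= 0:
        raise ValueError("prices must be positive")
    if not 0.0 < annual_decline < 1.0:
        raise ValueError("annual_decline must be between 0 and 1")
    if target_price >= current_price:
        return 0.0
    return math.log(target_price / current_price) / math.log(1.0 - annual_decline)


CURRENT = 56_000.0   # USD per square meter, the article's figure
DECLINE = 0.15       # assumed to repeat annually (not stated in the source)

# Hypothetical targets: a 10x and a 100x price reduction.
for factor in (10, 100):
    years = years_to_reach(CURRENT, CURRENT / factor, DECLINE)
    print(f"{factor}x cheaper at 15%/yr: about {years:.1f} years")
```

Under these assumptions, even a tenfold reduction takes roughly fourteen years, which is consistent with the paragraph's claim.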

- Single-Source hBN Supply Risk and Research Fragility

The 200x Wiedemann-Franz violation was made possible by hexagonal boron nitride crystals of a purity grade that is, in practical terms, available only from one source: Kenji Watanabe and Takashi Taniguchi at NIMS in Tsukuba, Japan. A significant fraction of the world's highest-impact graphene research — including the 2018 magic-angle superconductivity papers from MIT — depends on this same supply chain. If retirement, health circumstances, or institutional friction interrupted this supply, the pipeline of ultraclean-graphene experiments would face severe delays across multiple research groups simultaneously. This is not a hypothetical concern: materials science has precedents of research programs stalling when a critical materials provider exits the field. Attempts by US national laboratories and European materials institutions to produce hBN of comparable purity have reportedly not yet closed the quality gap, meaning the redundancy that would make this supply chain robust simply does not exist yet. For a field whose most exciting results depend structurally on a single two-person team's materials output, this is a genuine systemic risk that the global physics community has been slow to address with the urgency it deserves.

- Competing Technologies' Entrenched Market Position and Late-Mover Disadvantage

In the quantum sensor market that the IISc discovery theoretically addresses, graphene-based sensors face two well-resourced competitors with significant head starts. SQUID sensors have dominated medical-grade magnetic sensing for decades, with established clinical validation data, regulatory approval histories, and installed-base relationships with hospital purchasing departments. Diamond nitrogen-vacancy centers have crossed into commercial territory: QuantumDiamonds has a commercially available semiconductor inspection tool, and the NV center's room-temperature operation advantage removes the cryogenic barrier that makes SQUIDs impractical for broad deployment. A Nature Communications Engineering paper documents a graphene-diamond hybrid system improving NV center coherence time by approximately 2x — which suggests graphene's most realistic near-term quantum sensor application may be as an enhancing material for NV center devices, rather than as an independent competing platform. Displacing either SQUID or NV center technology requires graphene sensors to demonstrate clear and quantified superiority in at least one commercially relevant metric, and the current state of graphene sensor development — still research-stage, with no commercial prototype — places it years behind competitors who are already iterating on products in the market.

- The "Law Broken" Media Frame and Its Risk to Public Science Literacy

The headline "172-Year Physics Law Shattered" is attention-capturing and factually misleading in a way that actively harms public understanding of how science works. The Wiedemann-Franz law was never a universal principle without conditions. It was derived for conventional metals in the Fermi liquid regime, and its deviation in graphene's quantum critical Dirac fluid regime was theoretically predictable. "Violation" is the technically accurate term within physics, but in public communication it implies that the law was simply wrong, encouraging the misunderstanding that physics laws are unreliable and subject to arbitrary revision. This is the opposite of the epistemically useful takeaway. When this framing is repeated across multiple major discoveries, it reinforces a narrative in which scientific authority is fragile and laws are routinely overturned, which provides rhetorical ammunition for anti-science movements. The more precise framing, that the law's domain of applicability has been more exactly characterized, is scientifically correct but generates less media interest, creating a structural incentive problem in science communication that this discovery illustrates clearly.

- Two Decades of Graphene Promise Without Consumer Products Remains a Valid Structural Critique

Graphene was first isolated in 2004 and immediately described as a revolutionary material that would transform electronics, energy storage, medicine, and structural materials. In 2026, twenty-two years later, a consumer walking into any store cannot purchase a product where graphene's unique properties — its quantum electronic characteristics, its extraordinary strength-to-weight ratio, its thermal conductivity — are the decisive functional element. The Dirac fluid discovery is genuinely important physics, but it does not change the underlying commercialization dynamics that have constrained graphene's market impact: production cost remains prohibitive, yield and quality control at scale remain problematic, and the applications where graphene's properties provide an insurmountable advantage over cheaper alternatives remain narrow. European graphene patent approval rates of 34% — compared to 59% in the United States — reflect structural intellectual property challenges in the field's most important regulatory market. The expectation-disappointment cycle in graphene investment has repeated approximately three times since 2010, each time triggered by a compelling physics result that generated investor interest followed by a multiyear wait for commercialization that did not arrive on predicted timelines. The 200x Wiedemann-Franz violation is the most compelling result yet — but that observation is not sufficient, on its own, to conclude that the fourth cycle will break the pattern.

Outlook

In the immediate term — the next six months — the physics community will attempt to replicate these results in earnest. That's how science works, and given that this paper landed in Nature Physics with a 200x headline, the interest will be intense. I'd expect a minimum of three to five research groups to announce similar experiments by the end of 2026. MIT, Columbia, and Manchester — the institutional heavyweights of graphene research — will be first in line. The critical constraint is the sample quality: without hBN crystals of the purity that Watanabe and Taniguchi supply from NIMS, reproducing the 200x violation is essentially impossible. Labs that have established supply relationships with NIMS hold a structural advantage that could determine the replication race entirely. Watch the citation count on this paper — if it crosses 100 within six months, that's a Clarivate "hot topic" signal, and grant funding will follow. The IISc group is already running follow-up experiments, and the progression from Harvard's 10x to IISc's 200x took nine years. With improved sample quality now accelerating globally, the next major milestone could arrive in three to five years rather than another decade.

On the policy front, the timing of this publication is almost suspiciously convenient for India's science bureaucracy. The 57% year-on-year budget surge to 65,307 crore rupees arrives alongside a new R&D Innovation (RDI) program receiving 20,000 crore rupees, serious investment by any global standard. The IISc Nature Physics result gives India's Department of Science and Technology (DST) a concrete, internationally recognized, prestigious success story to anchor its argument for continued and expanded investment. I'd put the probability above 60% that IISc announces the creation of a dedicated Dirac fluid research center, or a significant expansion of its nano-science center, by late 2026. The India-Japan collaboration axis is also likely to deepen: NIMS already holds MOUs with several Indian institutions, and elevating this relationship to a government-level joint basic science program is a politically attractive move for both nations. Clarivate data shows India's natural-science paper quality index rising steeply every year since 2020; this result lands at the inflection point of that trend, not as a lucky outlier.

The medium-term battleground — three to seven years out — is the quantum sensor market, and it is already intensely competitive. At roughly $479 million in 2026 and growing at 10-15% annually toward a projected $13 billion by 2034 and potentially $60 billion by 2040, the market is real and the stakes are high. Three technologies are in the race: SQUIDs carry decades of clinical validation and femtotesla-level sensitivity, but liquid helium is their structural Achilles' heel for mass deployment. Diamond nitrogen-vacancy centers have room-temperature operation as their trump card, and QuantumDiamonds' commercial semiconductor inspection product proves the technology has crossed the commercial threshold. Graphene sensors combine theoretical high sensitivity with flexible-substrate compatibility and no cryogenic requirement — but remain years from a commercial prototype. My most realistic scenario: graphene sensor prototypes emerge within two years, but commercial products are eight to twelve years away. Diamond NV centers will capture the early quantum sensor market. Graphene is more likely to enter as a hybrid material first — a Nature Communications Engineering paper already documented a graphene-diamond combination improving coherence time by roughly 2x — before potentially competing independently in the 2035-plus timeframe.
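The growth figures quoted in this paragraph can be cross-checked with the standard compound-growth formula. The sketch below is a minimal helper for doing so; the function names are illustrative, and the inputs are the article's own numbers expressed in millions of US dollars.

```python
def project(start_value: float, annual_rate: float, years: int) -> float:
    """Value after compounding start_value at annual_rate for the given years."""
    return start_value * (1.0 + annual_rate) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


START_2026 = 479.0  # USD millions, from the article

# Where the quoted 10-15% annual range leads by 2034 (eight years out).
for rate in (0.10, 0.15):
    value = project(START_2026, rate, 8)
    print(f"{rate:.0%} per year -> ${value:,.0f}M in 2034")

# Growth rates implied by the quoted endpoint figures.
print(f"to $13B by 2034: {implied_cagr(START_2026, 13_000.0, 8):.1%} per year")
print(f"to $60B by 2040: {implied_cagr(START_2026, 60_000.0, 14):.1%} per year")
```

Running the numbers this way makes it easy to see which annual rate each headline projection actually assumes, and to compare that against the 10-15% range.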

The other medium-term story is what I'd call the "tabletop cosmology" paradigm, and I think it's underappreciated. If graphene's Dirac fluid can serve as a mathematical proxy for quark-gluon plasma transport physics, then every condensed-matter physics laboratory with a quality graphene stack is effectively a low-cost particle physics experiment. CERN can study quark-gluon plasma only in microsecond bursts at 14 TeV collision energies, requiring 22 nations' combined funding. Graphene can maintain the Dirac fluid state stably across extended measurement windows in a room-sized laboratory. The theoretical framework linking these two systems is already well-developed — the gap has been experimental data, and that gap is now closing. I'd put the probability at roughly 40% that a paper appears in Nature or Science between 2027 and 2028 with a title along the lines of "Testing Black Hole Thermodynamics with Graphene." This would represent a genuine methodological revolution: fundamental cosmology and particle theory questions answered in tabletop experiments, democratizing access in a way that could benefit far more researchers than CERN-model physics ever has.

Zooming out to the long term — ten to twenty years — two trajectories run in parallel. The graphene market is projected to grow from $1.32 billion in 2024 to $2.98 billion in 2028 at a 22.1% CAGR, driven by battery technology, composites, and electronics. Quantum sensors broadly — including graphene-based designs — could reach $60 billion by 2040. If graphene Dirac fluid sensors achieve commercial viability in wearable medical applications — the scenario McKinsey identifies as achievable by 2030 for quantum biosensors — then "the discovery in Bengaluru changed what's on your wrist" becomes a plausible near-term historical sentence. Simultaneously, India's paper output trajectory suggests it could challenge the United States for second place in global research rankings within five years; during the Scopus 2020-2025 period it already ranked first in paper volume. The IISc-NIMS model — theory and measurement from one country, precision materials from another — is replicable across Asia. India-Korea, India-Taiwan, and India-Singapore pairings could constitute an Asian equivalent of the CERN multi-nation framework, but leaner, faster, and organized around bilateral comparative advantage rather than shared infrastructure.

Let me be direct about the scenarios and where I think I could be wrong. The bull case, with a probability around 20%, has graphene quantum sensor prototypes entering clinical medical trials within three years, tabletop cosmology becoming a mainstream methodology accepted by the particle physics community, and IISc ascending to the top five condensed-matter physics institutions worldwide. The quantum sensor market in this scenario hits the upper bound of McKinsey's 2035 projection near $10 billion, propelled by military, space, and wearable-medical demand.

The base case — probability around 55% — sees significant theoretical progress and successful replication, but commercialization pushed to the 2035-2040 window. Diamond NV centers dominate the early quantum sensor market, graphene enters as a hybrid component, and the IISc-NIMS axis deepens bilaterally without triggering a broader Asian research network immediately. The bear case — around 25% — involves replication difficulties, persistent hBN supply bottlenecks from the Watanabe-Taniguchi single-point-of-failure, and graphene's production cost ($56,000 per square meter) failing to decline structurally, leaving this discovery in the realm of beautiful physics with no commercial pathway. Graphene's reputation as "20 years of promising, minimal delivering" gets reinforced rather than redeemed, and European graphene patent approval rates (34%, versus 59% in the US) compound the problem. The two variables I'll be watching most closely: whether any lab outside NIMS's supply chain produces comparable hBN within two years, and whether China or the US fields a competing quantum sensor breakthrough that leapfrogs the graphene pathway entirely. Either of those events shifts the base case timeline by at least five years.

Sources / References

- Universality in quantum critical flow of charge and heat in ultraclean graphene — Nature Physics

- IISc official press release on Dirac fluid discovery — ScienceDaily / IISc

- Crossno et al. (2016) — First experimental observation of WF law violation in graphene — Science (AAAS)

- On the Wiedemann-Franz Law Violation in Graphene and Quark-Gluon Plasma Systems — arXiv / Cornell University

- McKinsey — Quantum Sensing Poised to Realize Immense Potential in Many Sectors — McKinsey

- Clarivate — India Research Performance Scorecard — Clarivate Analytics

- CERN 2025 Financial Statement — CERN

- Graphenea — Challenges and Opportunities in Graphene Commercialization — Graphenea