It Came Out Before It Went In — Toronto Scientists Clocked the Impossible Time

Summary

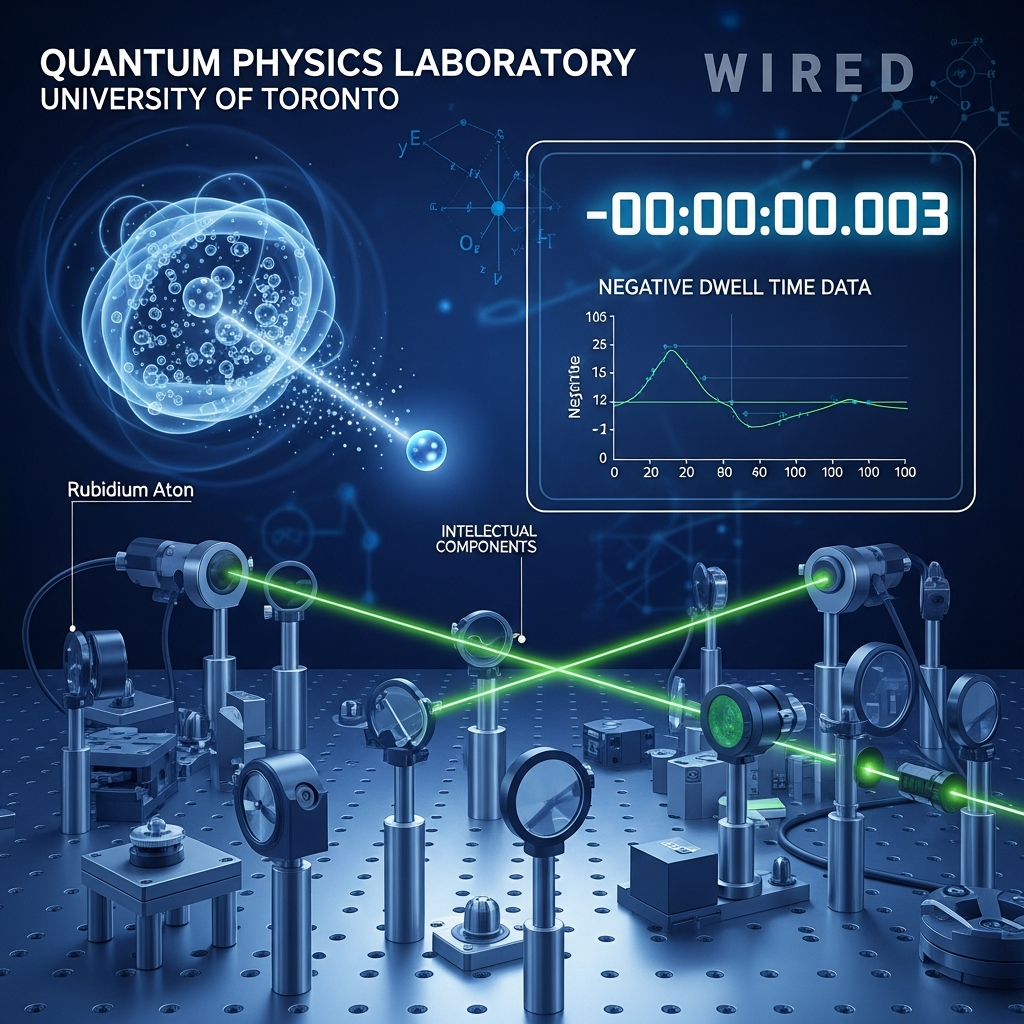

A research team at the University of Toronto fired single photons into a cloud of rubidium atoms and used the weak measurement technique to observe the photon dwell time, recording a statistically significant negative value. The result was published in Physical Review Letters in May 2026 (DOI: 10.1103/gjfq-k9dv). The experiment presents the first empirical evidence that a measured time can take on negative values at the quantum scale, with the photon appearing, in a classical interpretation, to exit the atomic cloud before it even entered. While classical physics has always treated elapsed time as a strictly non-negative, absolute measure, quantum mechanics has long lacked a formal time operator, treating time as an external background parameter rather than a dynamic observable of the system itself. This finding forces a rigorous reexamination of whether causality applies differently at quantum scales, whether time is an emergent macroscopic property rather than a fundamental constituent of reality, and how the interpretive frameworks of quantum mechanics must be revised in light of hard experimental evidence. Assessed against the long history of physics, this discovery joins the lineage of "uncomfortable data": results that resist existing frameworks and ultimately compel the construction of entirely new physical language.

Key Points

The First Experiment to Confirm Negative Photon Dwell Time via Weak Measurement

Professor Aephraim Steinberg's team at the University of Toronto applied the weak measurement technique, originally proposed by Yakir Aharonov, David Albert, and Lev Vaidman in 1988, to a cloud of rubidium atoms, tracking the statistical dwell time of single photons with unprecedented experimental precision. Weak measurement is a technique for extracting information from a quantum system while disturbing it as little as possible: unlike strong measurement, which collapses the quantum state entirely, weak measurement barely perturbs the system and derives a meaningful result from the statistical behavior of a large ensemble of individual trials. The central finding of this experiment was that the photon dwell time, measured during the cycle of absorption and re-emission by rubidium atoms, came out not as zero or a small positive number, but as a clearly negative value that fell well outside the bounds of statistical uncertainty. In classical terms, this is as if the photon exited the atomic cloud before it even entered, a result that is incompatible with any standard physical treatment of time as a non-negative quantity. The contributions of co-authors Daniela Angulo and Howard Wiseman, the latter a theorist at Griffith University, were essential to the experimental design and interpretive framework, and the results were formally published in Physical Review Letters after peer review (DOI: 10.1103/gjfq-k9dv). This experiment represents the culmination of roughly twenty years of incremental progress in weak measurement technology and opens a genuinely new frontier for studying the quantum nature of time empirically rather than purely theoretically.
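The statistical logic described above, where each trial is almost uninformative but the ensemble average is sharp, can be illustrated with a toy model. This is only a sketch under stated assumptions: the Gaussian noise model, the function name `estimate_from_weak_trials`, and every number in it (a shift of -0.5 in arbitrary units, a noise width of 20, 200,000 trials) are hypothetical and are not taken from the Toronto paper.

```python
import random
import statistics


def estimate_from_weak_trials(true_shift, noise_sigma, n_trials, seed=0):
    """Toy model: average many noisy readings of a small pointer shift.

    Assumption (not from the paper): each weak trial returns the true
    shift plus Gaussian noise that is much larger than the shift itself.
    """
    rng = random.Random(seed)
    readings = [rng.gauss(true_shift, noise_sigma) for _ in range(n_trials)]
    mean = statistics.fmean(readings)
    # Standard error of the mean shrinks as 1 / sqrt(n_trials).
    sem = statistics.stdev(readings) / n_trials ** 0.5
    return mean, sem


# Hypothetical numbers in arbitrary units: the noise is 40x the signal.
mean, sem = estimate_from_weak_trials(true_shift=-0.5, noise_sigma=20.0,
                                      n_trials=200_000)
print(f"estimated shift = {mean:.3f} +/- {sem:.3f}")
```

A single reading here says almost nothing about the sign of the shift, yet the average over the whole ensemble is negative by many standard errors. That is the sense in which a negative value can fall "well outside the bounds of statistical uncertainty".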

Causality Holds — But Our Concept of Time Does Not

The most important clarification about this experiment is that it does not demonstrate a violation of causality in any operationally meaningful sense: no information traveled faster than light, no effect was observed before its cause in the information-theoretic sense that physicists care about, and the setup cannot be used to send signals backward through time. Negative dwell time is closely analogous to the well-known phenomenon of superluminal group velocity in optics, an effect that has been observed repeatedly in laboratory settings but does not allow faster-than-light information transfer, because group velocity and signal velocity are distinct physical quantities and only the signal velocity bounds the speed of information. What the experiment does challenge, far more fundamentally, is the assumption embedded in all of classical physics that time must always be non-negative: the idea that "elapsed time" is an absolute, scalar quantity that can never dip below zero under any physical circumstances. If time can be measured as negative at the quantum scale, then time is not functioning as a universal, fundamental parameter in the way that classical physics assumes; instead, it may be an emergent property that only makes sense as a statistical average over enormous numbers of quantum events, much as temperature only has meaning for statistical ensembles and not for individual particles. The sensationalized "time travel" headlines that circulated in mainstream media following this publication represent a genuine failure of science communication, but dismissing the underlying experimental result as merely a statistical artifact would be an equal and opposite failure. The truth, that causality is intact but our classical concept of time is not, is actually more interesting than either misreading.
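The optics analogy can be made concrete with the standard textbook expression for group velocity. The relations below are general dispersion formulas and not the analysis used in the paper; the symbols $n(\omega)$ (real refractive index), $L$ (length of the medium), and $\tau_g$ (group delay) are introduced here for illustration.

```latex
v_g = \frac{c}{\,n(\omega) + \omega \,\dfrac{dn}{d\omega}\,},
\qquad
\tau_g = \frac{L}{v_g}
```

Near an absorption resonance the dispersion is anomalous, $dn/d\omega < 0$, so the denominator can fall below 1 (giving $v_g > c$) or below 0 (giving $v_g < 0$ and therefore $\tau_g < 0$). A negative group delay means the peak of the transmitted pulse emerges before the peak of the incident pulse arrives. The leading edge of the pulse, which is what carries new information, still travels no faster than $c$.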

A New Chapter in the Nearly Four-Decade Debate Over the Physical Reality of Weak Values

The physical reality of "weak values", the outputs produced by weak measurement procedures, has been actively debated in the quantum foundations literature since Aharonov, Albert, and Vaidman proposed the concept in 1988, and this Toronto experiment has just added the most dramatic empirical data point yet to that ongoing conversation. Weak values can, by their mathematical construction, take on values that lie far outside the range of outcomes possible in ordinary quantum measurement, including negative values for quantities that are strictly positive in classical physics, and the question of whether this reflects genuine physical fact or sophisticated statistical artifact has never been conclusively settled in the community. This experiment makes the artifact interpretation significantly harder to sustain, because the negative dwell time is not a marginal or noise-level effect but a clean statistical signal in an experiment that passed peer review at one of the most rigorous journals in physics. A further problem for the artifact interpretation is logical consistency: if weak values obtained in experiments on tunneling time, spin precession, and photon trajectories are accepted as physically meaningful (and they largely have been, across decades of community practice), then selectively declaring negative dwell time "unreal" requires a principled distinction that no proponent of the artifact view has clearly articulated. The ultimate arbiter of this debate will be the outcome of independent replication attempts; I expect at least two or three groups to publish independent results by the end of 2027, and if those results confirm the negative dwell time, the case for weak values as genuine physical realities rather than statistical shadows becomes very difficult to dispute.
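To see how a weak value can land outside the ordinary range of outcomes, here is a minimal sketch using the standard definition $A_w = \langle f|A|i\rangle / \langle f|i\rangle$ for a single qubit and the observable $\sigma_z$, whose eigenvalues are only +1 and -1. The qubit, the real-valued states, the angles, and the function name `weak_value_sigma_z` are all illustrative assumptions; the Toronto experiment concerns photon dwell time in an atomic cloud, not this toy system.

```python
import math


def weak_value_sigma_z(pre_angle, post_angle):
    """Weak value <f|sigma_z|i> / <f|i> for real qubit states.

    Illustrative assumption: both states have the form
    (cos(angle), sin(angle)), so the weak value is real.
    """
    pre = (math.cos(pre_angle), math.sin(pre_angle))
    post = (math.cos(post_angle), math.sin(post_angle))
    # sigma_z = diag(+1, -1), so it flips the sign of the second component.
    numerator = post[0] * pre[0] - post[1] * pre[1]
    overlap = post[0] * pre[0] + post[1] * pre[1]
    if abs(overlap) < 1e-12:
        raise ValueError("pre- and post-selected states are orthogonal")
    return numerator / overlap


# Identical pre- and post-selection: the ordinary expectation value.
ordinary = weak_value_sigma_z(0.3, 0.3)

# Nearly orthogonal pre- and post-selection: an anomalous weak value.
anomalous = weak_value_sigma_z(math.pi / 4, 3 * math.pi / 4 - 0.1)

print(f"ordinary  = {ordinary:.3f}")   # lies within [-1, 1]
print(f"anomalous = {anomalous:.3f}")  # lies far outside [-1, 1]
```

The anomalous case arises because the overlap in the denominator becomes small when the post-selected state is nearly orthogonal to the pre-selected one. Such post-selections succeed rarely, which is one reason these experiments need large ensembles of trials.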

New Experimental Constraints on Quantum Gravity Unification

The unification of quantum mechanics and general relativity remains the defining unsolved problem of modern theoretical physics, and the central obstacle to that unification is the concept of time itself. In general relativity, time is a dynamic geometrical component of spacetime — it stretches, curves, dilates with mass and energy, and is inseparable from space in the fabric of the universe. In quantum mechanics, by contrast, time is treated as a static external parameter that provides the coordinate against which quantum states evolve, but is not itself subject to quantum uncertainty or dynamical treatment within the theory. This fundamental mismatch has blocked serious progress on quantum gravity for decades, and the new experimental evidence that quantum dwell time can be negative provides, for the first time, a hard empirical constraint that any successful quantum gravity theory must be able to accommodate or explain. The two dominant frameworks — string theory and loop quantum gravity — treat time in fundamentally different ways, and experimental data showing that quantum-scale temporal behavior deviates from classical expectations creates a new discriminating test that theorists will need to address explicitly. Physicists such as Carlo Rovelli and Lee Smolin, who have long argued for emergent or relational conceptions of time in which time is not a fundamental given but an approximate description that emerges at large scales, will find in this result a concrete experimental anchor for positions that have until now been advanced primarily as theoretical arguments without direct empirical support.

Science Communication Under Pressure — The Risk of the "Time Travel" Narrative

This discovery is simultaneously one of the most exciting and most dangerous science communication scenarios that the physics community has faced in years. On the positive side, "time can be negative" is a phrase with immediate and universal appeal — you don't need a physics background to feel the weight of that claim — and the social media response after publication reportedly rivaled the 2012 Higgs boson announcement in virality, which is extraordinary for a paper in quantum foundations research. But the negative side is already unfolding: claims that the experiment "proves time travel," "violates causality," or shows "photons moving backward through time" have achieved wide circulation in mainstream media and social platforms, and none of these claims accurately reflects what the paper actually demonstrates. Once a wrong scientific narrative achieves viral spread, correcting it is nearly impossible — the cold fusion episode of 1989 is the canonical cautionary tale, where overblown initial reporting generated public excitement followed by devastating disappointment that produced lasting distrust of fusion energy research for decades. Steinberg's team took a responsible step by publishing accessible explanatory material alongside the technical paper, but the broader physics community needs to treat accurate public framing of this result as a collective priority, not an afterthought. The long-term stakes are real: accurate communication draws a generation toward physics and STEM education; inaccurate communication plants misconceptions that corrode public scientific literacy for years.

Positive & Negative Analysis

Positive Aspects

- The Revival of Quantum Foundations as a Legitimate Experimental Discipline

For decades, the foundational questions of quantum mechanics — the measurement problem, the interpretation debates, the physical reality of quantum superposition — were quietly sidelined in mainstream academic physics, dismissed as philosophy rather than physics. Funding agencies consistently favored quantum computing and quantum communication, where applications were tangible and development timelines were short; careers in foundational research were underfunded, risky, and often viewed with institutional skepticism. This Toronto experiment delivers a decisive counterargument: foundational questions are experimentally tractable, and doing a laboratory experiment on the quantum nature of time can produce results publishable in Physical Review Letters and capable of reshaping the entire field. The direct precedent is the 2022 Nobel Prize in Physics, awarded to John Clauser, Alain Aspect, and Anton Zeilinger for Bell inequality experiments that were once dismissed as mere philosophical exercises but ultimately proved both foundationally transformative and technologically essential for quantum information science. The renewed legitimacy of quantum foundations research as an empirical discipline will attract funding, attract talented researchers who previously chose safer applied areas, and create institutional momentum that has been missing from this corner of physics for too long.

- Indirect Advances in Quantum Computing and Quantum Sensing Technology

A negative dwell time result enriches our understanding of how quantum systems behave in time, with real-world implications for multiple areas of quantum technology. In quantum computing, the question of how long a qubit "spends" in a given state during a gate operation is directly relevant to error correction: if quantum temporal dynamics can be described more precisely — including behaviors that classical assumptions would categorically exclude — error correction protocols can potentially be made more efficient and more robust against decoherence. The three major industry players — Google, IBM, and Microsoft — collectively invest on the order of two billion dollars annually in quantum error correction research, and any conceptual breakthrough in how quantum temporal dynamics are understood could redirect a productive portion of that investment. In quantum sensing, refined weak measurement techniques are already pushing the frontier of what atomic clocks can achieve, and further precision improvements beyond the current 10⁻¹⁸ accuracy threshold would have cascading practical benefits for GPS navigation systems, financial market infrastructure, scientific measurement standards, and fundamental constants research. Basic science and practical technology have always been connected by a long, nonlinear chain, but history consistently shows that the most transformative technologies emerged from basic research that seemed purely theoretical at the time.

- A Catalyst for Public Scientific Engagement and STEM Recruitment

Perhaps the most underestimated benefit of a discovery like this one is its effect on the pipeline of future scientists. "Time can be negative" is one of those rare scientific findings that crosses from specialist journals into the broader cultural conversation — the kind of result that prompts a curious teenager to look up "quantum mechanics" at midnight and set off on a path that ends at a research laboratory. The viral response to this paper's publication reportedly rivaled the 2012 Higgs boson announcement in reach, which is genuinely extraordinary for a paper in quantum foundations research with no obvious immediate technological application. At a moment when STEM pipeline concerns are serious across many countries, a result this counterintuitive and this genuinely accessible provides a recruitment stimulus that no institutional advertising budget could replicate. Beyond the pipeline effect, this experiment offers a uniquely rich case study for undergraduate physics education: it can be used to teach weak measurement, quantum state evolution, the measurement problem, and the philosophical foundations of quantum mechanics all at once, through a result that will stop students in their tracks and demand that they think seriously about what they actually believe time is. The pedagogical value alone justifies the extensive coverage.

- A Trigger for Productive Interdisciplinary Research Across Physics, Philosophy, and Cognitive Science

If the quantum nature of time is genuinely different from what classical physics describes, the ripple effects extend well beyond physics departments and into fields that have always implicitly assumed time was a fixed, classical given. Philosophy of physics has long debated whether time is metaphysically real or an artifact of how human cognition organizes experience — a debate that has been conducted almost entirely in the absence of the kind of direct empirical data that this experiment begins to provide. Cognitive science and neuroscience study the human sense of temporal passage, including disorders of time perception, from a perspective that implicitly assumes time is a well-defined external parameter; if the physics of time is itself non-classical at the quantum scale, these disciplines may need to revisit foundational assumptions about what they are actually measuring. Computer science, particularly in the domains of causal reasoning, temporal logic, and the design of quantum programming languages, rests on assumptions about time that may need revision if quantum temporal behavior departs from the classical model in the ways this experiment suggests. The history of productive science is substantially a history of unexpected interdisciplinary cross-pollination — thermodynamics shaping information theory, neural biology shaping machine learning — and this discovery has precisely the structural profile to generate a new wave of such cross-disciplinary collaboration.

Concerns

- The Rapid Spread of the "Time Travel" Misinterpretation and Its Long-Term Damage

The most immediate and consequential risk from this discovery is the rapid viral propagation of a fundamentally wrong interpretation. Within hours of publication, mainstream media outlets and social media platforms were circulating claims that this experiment proved time travel was possible, that causality had been violated, and that photons were moving backward through time — none of which is what the paper reports, and all of which are the opposite of what the physics actually shows. This misinterpretation is not harmless excitement: it creates a public expectation that physics cannot meet, and when the "time travel revolution" fails to materialize, the resulting disillusionment generates real cynicism about scientific claims in general. The cold fusion episode of 1989 is the instructive historical precedent — overblown reporting of Fleischmann and Pons's claims created a wave of public excitement followed by devastating disappointment, and the residual distrust of fusion energy research persisted for decades, arguably delaying investment in what is now considered a genuinely promising technology. Once a false scientific narrative achieves wide circulation, correction is extraordinarily difficult — denials and clarifications rarely achieve anywhere near the reach of the original false claim, even when they come from the researchers themselves. The physics community must treat aggressive, early, accurate framing as a collective obligation, not an optional courtesy.

- Severe Technical Barriers to Replication and the Risk of Prolonged Uncertainty

Weak measurement experiments of the precision required to observe a clean negative dwell time signal are among the most technically demanding experiments in modern physics. The conditions that must be simultaneously maintained — the density and temperature of the rubidium atom cloud, the coherence properties of the single-photon source, the sensitivity and timing resolution of the photon detectors — represent a formidable engineering challenge that relatively few laboratory groups in the world are positioned to meet at the requisite level. The global count of laboratories capable of conducting this experiment at the necessary precision is estimated at fifteen to twenty, and the typical timeline for replication, even under favorable circumstances, runs six to twelve months. During that replication window, the result exists in a state of productive ambiguity that, while scientifically normal, generates genuine uncertainty for researchers and funders who would like to know whether the finding is robust before investing resources in follow-on work. Worse, if replication attempts encounter systematic differences from the original result — reflecting the extraordinary sensitivity of weak measurement to exact experimental conditions — the media will almost certainly frame this as the original result being "wrong," regardless of what the nuanced technical reality actually is.

- Deepening Fractures in Quantum Interpretation — From Productive Debate to Trench Warfare

The interpretation of quantum mechanics has been a source of genuine intellectual controversy for nearly a century, and there is a real risk that this result intensifies that controversy in ways that are more tribal than productive. The major interpretive camps — Copenhagen, many-worlds, de Broglie-Bohm pilot wave — will each attempt to absorb this result into their preferred framework, using it as confirmatory evidence for their position rather than as an empirical challenge that all frameworks must genuinely accommodate on equal terms. When scientific debates take on the character of inter-group competition rather than collaborative truth-seeking, they tend to generate publications written to score points against rival interpretations, conference sessions organized as debates rather than joint problem-solving, and a general atmosphere in which winning arguments displaces converging on truth. The history of quantum foundations contains cautionary examples — the intellectually generative Bohr-Einstein debates eventually gave way to periods where interpretation disputes became unproductive, empirically unresolvable standoffs that consumed significant community energy without advancing knowledge. With this dramatic new experimental result in play, there is a genuine opportunity for empirical evidence to begin constraining interpretation debates in ways that pure argument never could — but there is an equally genuine risk that entrenched camps will simply reabsorb the new evidence through their existing interpretive lens.

- Funding Concentration and the Distortion of Broader Physics Research Priorities

A high-profile experimental result in any area of science tends to attract disproportionate research funding, and there is a legitimate concern that this result will concentrate investment in negative-time and weak measurement research at the expense of other equally important but less visually spectacular foundational questions. Dark matter detection, dark energy characterization, neutrino mass measurement, and the search for physics beyond the Standard Model are all genuinely pressing foundational questions that require sustained, patient, multi-decade funding commitments that are inherently incompatible with responsiveness to the news cycle. The pattern of post-discovery funding distortion is well-documented: after the detection of gravitational waves in 2015, gravitational wave astronomy received a disproportionate share of new physics funding for several years, leaving some other observational astronomy programs relatively underfunded during a critical window. Funding allocation bodies — the NSF, ESA, STFC, and their international counterparts — carry a responsibility to maintain diversified portfolios of foundational physics investment and to resist the pressures that high-profile media coverage creates. The deeper risk is not just misallocation of resources for a few years but the compounding possibility that important foundational questions go chronically underresourced precisely during the windows when a breakthrough would otherwise have been within reach.

Outlook

In the near term, meaning the next one to six months, the aftermath of this publication is going to be fascinating to watch. The immediate response from the physics community after the Physical Review Letters publication has been intense, and I expect to see at least ten follow-up theoretical papers appear on arXiv within the next three months alone. These will fall broadly into two camps: papers exploring the theoretical implications of negative dwell time for quantum foundations, and papers critically scrutinizing the experimental methodology. Physicists with a long-standing stake in the weak value debate, such as Lev Vaidman (one of the concept's originators) and his school of thought, will have something pointed to say about whether this result vindicates or complicates their position. The debate over whether weak values reflect physical reality is going to get significantly louder in the coming months, and that debate will be sharper and more empirically grounded than it has ever been before.

Experimental replication attempts will also begin almost immediately. The number of research groups globally with the technical capability to perform weak measurement experiments at this level of precision is estimated at around fifteen to twenty. The most likely early movers are the University of Vienna group of Anton Zeilinger, a 2022 Nobel laureate in Physics for related Bell inequality work, and Howard Wiseman's own group at Griffith University in Australia. Because Wiseman was a co-author on the original paper, his group is well positioned to test the result with modified experimental designs, although such a check would not count as fully independent verification. Standard timelines for replication in this area of experimental physics run six to twelve months, which means the first independent confirmation or refutation could arrive by late 2026 or early 2027. That outcome will be decisive: successful replication dramatically elevates the credibility of the finding, while failure to replicate shifts attention squarely toward systematic experimental error in the original setup.

In the medium term, roughly six months to two years out, I expect substantially deeper structural changes in how the research community organizes around these questions. My prediction is that there will be at least three or four major international conferences dedicated to the quantum nature of time within this window, with at least one making "The Quantum Nature of Time" its central organizing theme. These gatherings are not just academic rituals: they function as coordination mechanisms, setting the agenda for research programs that will determine where funding flows and where careers are built for the next decade. Given that the 2022 Nobel Prize in Physics validated foundational quantum experiments of this type, I would not rule out this topic entering Nobel-level conversation again before 2030. The window for high-profile foundational results to receive that recognition appears to be opening.

The medium-term response from the quantum information science community will be critical to watch. Researchers working in quantum computing, quantum communication, and quantum sensing will be scrutinizing this result for practical leverage. If the dwell time of quantum states during gate operations can be understood through a new conceptual lens, including the possibility of non-classical temporal behavior, this opens potential new angles for quantum error correction. Google, IBM, and Microsoft are currently spending on the order of two billion dollars combined annually on quantum error correction; even a modest reconceptualization of quantum temporal dynamics could redirect research priorities in meaningful ways. In quantum sensing, continued refinement of weak measurement techniques is already pushing the frontier of what atomic clocks can achieve, and further precision improvements beyond the current 10⁻¹⁸ accuracy threshold would have cascading practical benefits for GPS navigation systems, financial market infrastructure, scientific measurement standards, and fundamental constants research. The connection between basic quantum time research and these technologies is indirect but historically consistent with how foundational breakthroughs eventually become engineering tools.

Looking further ahead — two to five years out — I want to make some genuinely ambitious forecasts. If negative dwell time is independently replicated and theoretically grounded in a robust new framework, this result could become decisive evidence for the view that time is a macroscopic emergent phenomenon rather than a fundamental constituent of reality. Physicists such as Carlo Rovelli and Lee Smolin, who have spent careers arguing for relational or emergent conceptions of time, will have a powerful new data point to build from. If this interpretive direction becomes the mainstream consensus, the implications cascade into physics pedagogy: the chapter on "time" in standard university quantum mechanics textbooks would need to be fundamentally rewritten for the first time since those textbooks were authored in the mid-twentieth century. The last time the physics curriculum was revised at this level of depth was when the Standard Model solidified in the 1970s, and that took decades of community-wide adjustment.

The implications for quantum gravity are equally significant over this timeframe. String theory and loop quantum gravity — the two dominant frameworks for unifying quantum mechanics with general relativity — treat time in fundamentally different ways. String theory embeds time as one coordinate axis of a higher-dimensional spacetime manifold; loop quantum gravity quantizes spacetime itself into discrete Planck-scale units in which both space and time emerge from a combinatorial structure. Experimental evidence that quantum dwell time can be negative creates a discriminating empirical constraint: any viable quantum gravity theory must be able to accommodate or explain this result. The global research budget for quantum gravity stands at roughly half a billion dollars annually, and this finding has the potential to increase that investment by thirty to fifty percent as the field gains both public visibility and a new empirical anchor that it has long been lacking.

Let me set out three explicit scenarios. In the optimistic scenario — the bull case — negative dwell time is confirmed by three or more independent experiments, a compelling new theoretical framework emerges to explain it, and the result provides decisive guidance for the quantum gravity unification program. I put the probability of this full outcome at around fifteen to twenty percent over a five-year horizon. In the baseline scenario — the one I assign fifty to sixty percent probability — replication succeeds but interpretive consensus does not follow quickly, leaving the result as a well-established experimental fact whose theoretical meaning remains openly contested for five years or more. This is the most common pattern in the history of physics: the experimental fact settles faster than the theoretical interpretation. In the pessimistic scenario — which I assign twenty to thirty percent probability — replication attempts surface systematic experimental errors in the original setup, and the negative dwell time is ultimately attributed to an artifact of measurement conditions rather than genuine quantum physics. Even in that case, the technical advances in weak measurement methodology required to reach this precision would remain valuable for the field.
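As a quick sanity check on these forecasts, the three quoted probability ranges should be able to sum to 100 percent. The short sketch below only does that arithmetic; the dictionary keys and variable names are labels chosen here, and the figures are the ranges stated in the paragraph above.

```python
# Probability ranges (in percent) quoted for the three scenarios.
scenarios = {
    "optimistic": (15, 20),
    "baseline": (50, 60),
    "pessimistic": (20, 30),
}

low_total = sum(low for low, _ in scenarios.values())
high_total = sum(high for _, high in scenarios.values())
midpoint_total = sum((low + high) / 2 for low, high in scenarios.values())

# The ranges are mutually consistent if 100% lies between the two totals.
is_consistent = low_total <= 100 <= high_total
print(low_total, high_total, midpoint_total, is_consistent)
# prints: 85 110 97.5 True
```

The ranges are consistent with one another, and their midpoints sum to 97.5 percent, so the three scenarios are meant to cover essentially all outcomes.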

The most important caveat to everything I've said concerns the pace of fundamental physics on questions this deep. The Bell inequality was proposed in 1964; the definitive loophole-free test came in 2015. If negative dwell time proves genuinely real and genuinely fundamental, integrating it into the formal language of physics may require not years but decades. What I am confident about is this: the question has now been made experimentally concrete in a way it simply was not before this paper. That is already enormously valuable, independent of how the subsequent debates resolve. The recommendation I would make to any intellectually curious reader is to follow Physical Review Letters and Nature Physics over the next two to three years, tracking the follow-up papers and replication attempts. You may be watching, in real time, the earliest stages of the next paradigm shift in how physics understands the most fundamental dimension of reality.
