Bigger Isn't Smarter: The 99% Energy Revolution That Just Broke AI's Cardinal Rule

Summary

A neuro-symbolic robot controller developed by a Tufts University research team led by Timothy Duggan, Pierrick Lorang, and Matthias Scheutz has achieved something much of the industry long insisted was impossible: cutting training energy by 99% and operational energy by 95% compared to standard Vision-Language-Action (VLA) models, while posting higher accuracy. The preprint, posted to arXiv in February 2026 and set for official presentation at ICRA 2026 in Vienna this June, directly challenges a decade of scaling-law orthodoxy under which hundreds of billions of dollars were bet on the premise that bigger always means better. If the numbers hold up under independent replication, the implications stretch far beyond energy bills: into the structure of Big Tech's market dominance, global AI governance, and who gets to build the next generation of intelligent systems.

Key Points

99% / 95% — The Numbers That Erase a Trade-off

The Tufts University team led by Timothy Duggan, Pierrick Lorang, and Matthias Scheutz posted a preprint to arXiv in February 2026 (arXiv:2602.19260), since accepted for ICRA 2026 in Vienna, demonstrating that a neuro-symbolic robot controller cuts training-phase energy by 99% and operational energy by 95% compared to a standard Vision-Language-Action model, while simultaneously improving accuracy. On the Tower of Hanoi task, the standard VLA model achieved a 34% success rate while the neuro-symbolic system hit 95%. On novel compositional tasks never seen during training, the VLA flatlined at 0% while the neuro-symbolic system maintained 78%. Training time collapsed from 36 hours to 34 minutes. For a decade, AI research operated on the premise that efficiency and capability exist in fundamental tension: smaller means cheaper but worse, and larger means more capable but expensive. This result doesn't simply improve on that trade-off. It erases it. When a system 100 times cheaper to train than the alternative also beats that alternative on every reported accuracy metric, the premise that justified hundreds of billions in GPU procurement doesn't just weaken; it fails structurally. That is a different kind of event from an incremental benchmark improvement.
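The two headline percentages translate into different multipliers, a distinction that is easy to lose when "100x" gets quoted for both. A quick conversion using only the figures above (the helper name is illustrative, not from the paper):

```python
"""Convert the reported reductions into multiplicative factors.

The percentages and times come from the text; the helper name is illustrative.
"""


def reduction_to_factor(reduction_pct: float) -> float:
    """A cut of p% leaves (100 - p)% of the original, i.e. a 100 / (100 - p) x saving."""
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction must be in [0, 100)")
    return 100.0 / (100.0 - reduction_pct)


training_factor = reduction_to_factor(99.0)     # training-phase energy
operational_factor = reduction_to_factor(95.0)  # operational (inference) energy
time_factor = (36 * 60) / 34                    # 36 hours vs 34 minutes, in minutes

print(f"training energy:    {training_factor:.0f}x less")
print(f"operational energy: {operational_factor:.0f}x less")
print(f"training time:      {time_factor:.1f}x faster")
```

Run as written, it reports 100x for training energy, 20x for operational energy, and roughly 63.5x for training time, so the "100x" framing applies to training rather than to day-to-day operation.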

Scaling Laws Were Never Laws — They Were a Decade-Long Industrial Habit

The 2020 Kaplan et al. paper and the 2022 Chinchilla paper from Hoffmann et al. formalized the observation that, for dense transformer architectures, scaling up parameters, data, and compute produces predictable power-law decreases in loss, a straight line on a log-log plot. That relationship got elevated from a conditional finding to an industry creed, cited in investor presentations, CEO speeches, regulatory hearings, and government policy documents as the final justification for unlimited GPU spending. What almost never got quoted was the papers' own caveat: these relationships hold under specific conditions. Six years of market enthusiasm buried that qualifier. The Tufts result forces it back into the open. Scaling laws worked impressively because the entire industry chose to optimize within the same narrow class of architectures, dense transformer families, and rarely stress-tested whether different architecture families obeyed different scaling relationships. The Tufts result suggests they can. The law was a feature of the monoculture, not of the physics. I'd argue this distinction, between an architectural artifact and a genuine natural law, will define the central investment debate in AI for the next five years, reshaping how every major participant allocates capital.
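For readers who want the conditional relationship in symbols, here is a sketch of the two standard functional forms, with N the number of model parameters, D the number of training tokens, and N_c, α_N, E, A, B, α, β constants fitted to one particular model family and training setup. The first is the parameter-only form associated with Kaplan et al.; the second is the joint form fitted by Hoffmann et al.

```latex
\begin{aligned}
L(N) &\approx \left(\frac{N_c}{N}\right)^{\alpha_N}
  \quad\Longleftrightarrow\quad
  \log L \approx \alpha_N \log N_c - \alpha_N \log N \\[4pt]
L(N, D) &\approx E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}}
\end{aligned}
```

Nothing in either equation says the fitted constants carry over to a different architecture family; that is the conditional part the paragraph above argues was forgotten.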

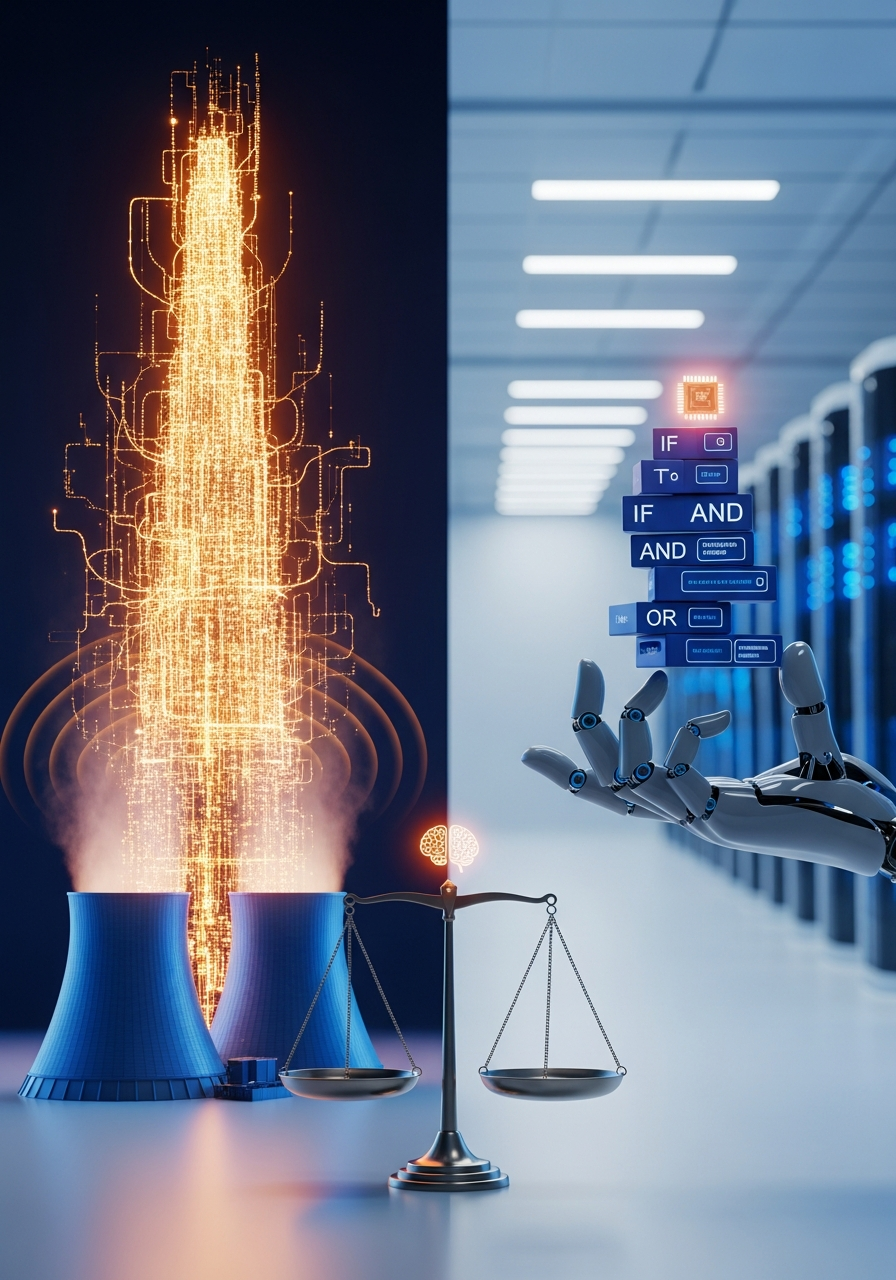

The Energy Crisis Is an Architecture Problem, Not a Supply Problem

The IEA's 2025 report on energy and AI projected global data center electricity demand, driven largely by AI, rising from 415 TWh in 2024 to 945 TWh (consumption) / 1,000 TWh (production) by 2030, slightly more than Japan's entire national electricity consumption today. Big Tech's response was to source more power: Microsoft's 20-year, $16 billion deal with Constellation Energy to restart Three Mile Island Unit 1 at 835 MW by 2028, Amazon's $20 billion partnership with Talen Energy for a 1,920 MW campus next to the Susquehanna nuclear plant, Google's SMR orders, and coal plant life extension approvals in multiple states. Over the past year, hyperscaler nuclear-related contracts in the United States alone exceeded 10 GW. The neuro-symbolic result reframes this entirely. If a substantial fraction of that projected demand growth is architectural waste, generated by a poorly chosen compute paradigm rather than by the genuine demands of the tasks being run, then building more reactors to keep the lights on is like fixing a leaking pipe by running the tap harder. The question that finally has empirical grounding behind it is: why are we using this much energy in the first place? That question changes the shape of every energy policy conversation touching AI.
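A back-of-envelope sketch makes the stakes concrete. It computes the annual growth rate implied by the 415 TWh and 945 TWh figures above, then asks what 2030 demand would look like if some share of the growth never materialized. Those shares are hypothetical knobs, not estimates from the IEA or anyone else.

```python
"""Back-of-envelope arithmetic on the IEA projection quoted in the text.

The 415 TWh (2024) and 945 TWh (2030) figures come from the text.
The "avoidable share" values are hypothetical knobs, not estimates.
"""

BASE_YEAR, TARGET_YEAR = 2024, 2030
BASE_TWH, TARGET_TWH = 415.0, 945.0


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1.0 / years) - 1.0


def demand_if_growth_avoided(avoidable_share: float) -> float:
    """2030 demand if a given share of the *growth* (not the base) never materializes."""
    growth = TARGET_TWH - BASE_TWH
    return TARGET_TWH - avoidable_share * growth


years = TARGET_YEAR - BASE_YEAR
print(f"implied growth: {implied_cagr(BASE_TWH, TARGET_TWH, years):.1%} per year")
for share in (0.25, 0.50, 0.75):  # hypothetical shares of "architectural waste"
    print(f"{share:.0%} of growth avoided -> {demand_if_growth_avoided(share):.0f} TWh in 2030")
```

The baseline implies roughly 15% compound growth per year. How far the 2030 figure falls depends entirely on the avoidable share, which no one has yet measured.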

Neuro-Symbolic's Return Is Not History Repeating — It's Structural Maturation

Symbolic AI has an obituary already written in the history books. The expert systems of the 1970s and 1980s generated enough commercial momentum to spawn a full industry, then collapsed when the brittleness of hand-coded rule sets became commercially intolerable. The 2012 deep learning revolution built its victory narrative on top of that failure. Current neuro-symbolic architecture is structurally different from what failed then, and the distinction matters enormously. Past failures were specifically about the impossibility of manually encoding all the rules needed for real-world knowledge breadth. Today's neuro-symbolic systems use neural networks for the pattern-learning layer and symbolic logic for verification, recombination, and explicit reasoning: fast intuition feeding into slow deliberation. That architecture is not a nostalgic return to expert systems. It's arguably a closer approximation of how human cognition operates: the dual-system model that cognitive scientists have described for decades, in which automatic pattern recognition and explicit logical reasoning run in parallel rather than in competition. Velasquez and colleagues, writing in PNAS Nexus in 2025, described neuro-symbolic AI as a direct antithesis to scaling laws. By 2030, I'd expect the phrase 'pure neural network AI' to carry roughly the same archaic flavor as 'pure mainframe software.'
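To make that division of labor concrete, here is a minimal Python sketch of the neural-then-symbolic pattern on the Tower of Hanoi task mentioned earlier. It is not the Tufts team's implementation: `perceive` is a hypothetical stand-in for a trained perception network and returns hard-coded probabilities, while the grounding, planning, and verification steps are ordinary symbolic code.

```python
"""Minimal neuro-symbolic loop on Tower of Hanoi (illustrative only).

Assumption: `perceive` stands in for a trained neural network and simply
returns hard-coded peg probabilities. The planner and verifier are plain
symbolic code. This is not the Tufts team's implementation.
"""
from typing import Dict, List, Tuple

Move = Tuple[int, int]  # (from_peg, to_peg)


def perceive() -> Dict[int, List[float]]:
    """Hypothetical neural output: for each disk (1 = smallest), P(disk on peg 0/1/2)."""
    return {1: [0.97, 0.02, 0.01], 2: [0.95, 0.03, 0.02], 3: [0.99, 0.005, 0.005]}


def to_symbols(probs: Dict[int, List[float]], threshold: float = 0.9) -> List[List[int]]:
    """Ground neural beliefs into a symbolic state: three pegs, disks listed bottom to top."""
    pegs: List[List[int]] = [[], [], []]
    for disk in sorted(probs, reverse=True):  # place larger disks first
        p = probs[disk]
        peg = max(range(3), key=lambda i: p[i])
        if p[peg] < threshold:
            raise ValueError(f"perception too uncertain about disk {disk}")
        pegs[peg].append(disk)
    return pegs


def plan(n: int, src: int, dst: int, aux: int) -> List[Move]:
    """Classic recursive Hanoi plan: 2**n - 1 moves, valid for any n."""
    if n == 0:
        return []
    return plan(n - 1, src, aux, dst) + [(src, dst)] + plan(n - 1, aux, dst, src)


def verify(pegs: List[List[int]], moves: List[Move]) -> List[List[int]]:
    """Symbolically execute the plan, rejecting any move that breaks the rules."""
    state = [list(p) for p in pegs]
    for src, dst in moves:
        if not state[src]:
            raise ValueError(f"illegal move {src}->{dst}: source peg empty")
        disk = state[src][-1]
        if state[dst] and state[dst][-1] < disk:
            raise ValueError(f"illegal move {src}->{dst}: larger disk on smaller")
        state[dst].append(state[src].pop())
    return state


state = to_symbols(perceive())
moves = plan(len(state[0]), src=0, dst=2, aux=1)  # assumes all disks start on peg 0
final = verify(state, moves)
print(len(moves), final)  # 7 moves, all disks end on peg 2
```

The planner is written once for any number of disks, which illustrates (without proving) why explicit symbolic structure can recombine cleanly on tasks it was never trained on, while the verifier rejects any plan that breaks the rules instead of executing it.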

Robotics as First Proving Ground Predicts the Adoption Sequence

The fact that neuro-symbolic AI's first major empirical validation arrived at ICRA is not coincidental. Robotics imposes the harshest combination of constraints on AI architectures: strict latency limits (a robot arm cannot wait 500ms for a cloud inference round-trip), severe energy budgets, and high repeatability requirements where random failures carry real physical consequences. These are precisely the dimensions where large foundation models perform worst on edge hardware, and precisely the ones neuro-symbolic design targets. The implication for deployment sequence is direct: autonomous vehicles, surgical robots, industrial cobots, wearable medical devices, and satellite onboard AI are the next commercial beachheads, because they face the same constraint profile as the robotic systems where the initial validation happened. The cloud hyperscalers face disruption from the edges inward, not from the center out. I'd place the inflection point somewhere around 2028, on an earnings call where revenue growth in edge AI products visibly diverges from cloud AI services: no dramatic announcement, just a quiet tonal shift in how management discusses the business mix.

Positive & Negative Analysis

Positive Aspects

- AI Accessibility Becomes Structurally Democratic for the First Time

Neuro-symbolic architecture's reported 100x reduction in training energy restructures who can participate in frontier AI development. For the past decade, training at the frontier required hyperscaler-scale data center infrastructure, a capital barrier that effectively limited meaningful AI development to four or five American and Chinese companies, with everyone else consuming APIs as subscribers rather than creating as builders. A 100x cheaper training paradigm doesn't just make existing participants more efficient; it changes the composition of participants entirely. Mid-sized enterprises can train their own domain-specific models. University labs in developing economies can run meaningful training experiments without cloud compute grants from the very companies whose market dominance they might be studying. Healthcare and education applications in the global south can operate locally without dependency on foreign API access. True AI democratization has never been about open-weight downloads; it's been about absolute training cost coming down to a level accessible outside the hyperscaler tier. That threshold may be coming into view for the first time, and the implications for who builds the next generation of AI systems are structural rather than incremental.

- Climate Budget and AI Progress Can Finally Coexist

Multiple climate research groups have estimated that, on the current trajectory, AI data center electricity demand alone could consume 3-4% of the global carbon budget by 2030, a figure that directly contradicts the AI industry's narrative of AI as a climate solution and has already contributed to official data center permitting moratoriums in several regions. The 99% training-energy reduction, if it holds at commercial scale, provides an empirically grounded pathway out of that contradiction. It becomes theoretically viable to maintain or even accelerate the pace of AI capability development while cutting associated carbon output dramatically. The impasse between climate advocates and technology optimists over the past five years was rooted in a shared assumption that compute growth and energy consumption are hardcoded together: that you cannot have more capable AI without proportionally higher energy consumption. Neuro-symbolic architecture offers some of the first tested counter-evidence to that assumption. The conversation can now be framed as an engineering problem rather than a political standoff, which fundamentally changes what solutions are even discussable.

- Explainability Becomes a Native Feature, Not an Afterthought

The EU AI Act already requires that high-risk AI systems be transparent enough for deployers to interpret their output (Article 13) and gives affected people a right to a clear and meaningful explanation of individual decisions made with such systems (Article 86); other jurisdictions are weighing similar rules. Pure neural networks are structurally ill-suited to satisfy that kind of requirement. When billions of learned weights interact to produce a classification or recommendation, post-hoc interpretation tools like SHAP and LIME can only approximate the decision pathway; they cannot expose the actual causal chain. Neuro-symbolic architecture is different at the structural level: the symbolic reasoning layer operates through explicit rules that can be inspected, audited, and reported. 'This decision followed from Rule A and Condition B' is a sentence a neuro-symbolic system can genuinely produce rather than merely approximate. For deployment in medical diagnosis, credit approval, legal judgment, and public welfare allocation, domains where accountability is legally and ethically non-negotiable, that structural transparency converts from a nice-to-have into a decisive adoption advantage. For companies, it simultaneously reduces legal liability exposure and compliance overhead. For citizens, it makes the right to meaningful explanation real rather than rhetorical.
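The "Rule A and Condition B" style of explanation falls out of even a toy forward-chaining engine that records which rule produced each conclusion. The rules, fact names, and thresholds below are hypothetical and illustrative only; in a real neuro-symbolic system the input facts would come from the neural layer, so the explanation is only as trustworthy as that grounding.

```python
"""Toy rule engine that records *why* each conclusion was reached.

Illustrative only: the rules, facts, and names are hypothetical and are not
drawn from any regulation or product.
"""
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Rule:
    name: str
    conditions: FrozenSet[str]
    conclusion: str


def forward_chain(facts: Set[str], rules: List[Rule]) -> Dict[str, str]:
    """Apply rules until nothing new fires; map each derived fact to its justification."""
    known = set(facts)
    why: Dict[str, str] = {}
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                conds = " and ".join(sorted(rule.conditions))
                why[rule.conclusion] = f"{rule.conclusion} followed from {rule.name} because {conds}"
                changed = True
    return why


rules = [
    Rule("Rule A", frozenset({"income_verified", "debt_ratio_below_40pct"}), "affordability_ok"),
    Rule("Rule B", frozenset({"affordability_ok", "no_recent_default"}), "approve"),
]
trace = forward_chain({"income_verified", "debt_ratio_below_40pct", "no_recent_default"}, rules)
for fact in ("affordability_ok", "approve"):
    print(trace[fact])
```

Each derived fact carries a readable justification, and removing an input fact (for example `no_recent_default`) simply stops the dependent conclusion from being derived.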

- Edge AI Finally Gets Its Native Architecture

The edge device market (autonomous vehicles, surgical robots, industrial cobots, wearable medical devices, agricultural drones, low-earth orbit satellite processors) has always been the domain where the large foundation model paradigm fits worst. Cloud inference round-trips can consume 500ms or more; physical systems operating in real time cannot absorb that latency. Battery constraints impose hard energy ceilings that dense transformer models consistently exceed. A 20x reduction in operational energy consumption, which is what the reported 95% cut amounts to, doesn't represent a percentage-point improvement for these applications; it can represent the difference between a product that works and a product that cannot be built. If the efficiency gains demonstrated in the Tufts benchmark carry into commercial edge deployment at even a fraction of their lab magnitude, the range of viable AI-powered edge products expands dramatically. Systems that previously required constant cloud connectivity can operate fully offline: in areas without network coverage, in disaster response scenarios, in medical facilities with strict data privacy requirements, and in operational environments where connectivity is unavailable or compromised. Edge AI transitions from a constrained adjunct of cloud AI to an independent platform with its own capability ceiling.
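A rough budget check shows how latency and energy constraints interact on an edge device. Only the 500 ms cloud round-trip and the 95% operational cut come from the text; the control-loop rate, on-device latency, battery capacity, and power draws are hypothetical placeholders chosen for illustration.

```python
"""Does a given inference path fit an edge device's latency and energy budget?

Every number below is a hypothetical placeholder except the 500 ms cloud
round-trip and the 95% operational-energy cut, which come from the text.
"""


def fits_control_loop(latency_ms: float, loop_hz: float) -> bool:
    """An action must be ready within one control period (1000 / loop_hz ms)."""
    return latency_ms <= 1000.0 / loop_hz


def battery_hours(battery_wh: float, inference_w: float, other_w: float) -> float:
    """Runtime when the device draws inference power plus everything else."""
    return battery_wh / (inference_w + other_w)


LOOP_HZ = 20.0               # hypothetical: 50 ms control period
CLOUD_RTT_MS = 500.0         # cloud round-trip figure from the text
LOCAL_MS = 30.0              # hypothetical on-device inference latency

BATTERY_WH = 100.0           # hypothetical battery capacity
OTHER_W = 5.0                # hypothetical motors/sensors baseline draw
BASELINE_INFERENCE_W = 40.0  # hypothetical dense-model inference draw
CUT_INFERENCE_W = BASELINE_INFERENCE_W * (1 - 0.95)  # apply the 95% operational cut

print("cloud fits loop:", fits_control_loop(CLOUD_RTT_MS, LOOP_HZ))
print("local fits loop:", fits_control_loop(LOCAL_MS, LOOP_HZ))
print(f"runtime before: {battery_hours(BATTERY_WH, BASELINE_INFERENCE_W, OTHER_W):.1f} h")
print(f"runtime after:  {battery_hours(BATTERY_WH, CUT_INFERENCE_W, OTHER_W):.1f} h")
```

With these placeholder numbers the cloud path misses a 50 ms control period outright, and cutting inference power by 95% extends runtime from about 2.2 to about 14.3 hours. That is a large gain but not 20x, because the rest of the device keeps drawing power, which is one concrete reason lab multipliers shrink in products.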

- Big Tech's GPU Moat Stops Being a Moat

For the past decade, the moat around frontier AI has been GPU ownership and electricity access — two resources that only three to four hyperscalers could secure at meaningful scale, which is precisely why those companies effectively monopolized frontier model development. Neuro-symbolic architecture disrupts that resource equation at the foundation. When 100x less compute produces comparable or superior results, the bottleneck in AI research and development shifts from capital to ideas — from who can afford the most GPU hours to who can design the best architecture. That shift structurally reopens the frontier to smaller research teams, university laboratories, public research institutions, and domain-specific startups. The market structure that emerges from a cheaper architectural paradigm looks less like four dominant players selling similar models trained on similar data and more like a diverse ecosystem of hundreds of specialized models, each optimized for specific domains. When monopoly loosens, the geometry of innovation changes from linear to radial — more directions, more experiments, more diversity of outcomes, and ultimately more benefit distributed to consumers rather than concentrated in the shareholders of the companies that build these systems.

Concerns

- Hundreds of Billions in GPU Investment Risk Becoming Stranded Assets

NVIDIA's fiscal year 2025 data center revenue reached $115.2 billion, out of $130.5 billion in total revenue, and the segment generated $51.2 billion in Q3 FY2026 alone, a single-quarter record for any semiconductor company. The combined AI-related capital expenditure of the four major U.S. hyperscalers is estimated at approximately $690 billion for 2026, up from roughly $400 billion in 2025. NVIDIA's market share in AI data center GPUs remains above 92%. If neuro-symbolic architecture captures a meaningful fraction of AI workloads, a substantial portion of that investment gets retroactively reclassified as overcapacity. Stranded technology assets don't follow clean liquidation curves: their devaluation ripples through equity markets, corporate bond markets, pension fund portfolios, and AI-themed ETFs in ways that compound and accelerate. The historical parallel that surfaces most clearly is the telecom infrastructure overbuild ahead of the 2000 crash: capital poured at scale into capacity whose demand assumptions turned out to be wrong, followed by a correction whose severity surprised the insiders who had approved the spending. The underlying technology was real. The overbuild was the problem. The structural logic maps closely to the current AI infrastructure situation, and the transition timeline should be measured in years rather than quarters.

- Generalization Beyond Structured Domains Is Still Unproven

The Tufts results emerged from a clearly bounded, well-structured domain: physical robotic manipulation tasks with defined success criteria and observable outcomes. Symbolic reasoning has historically performed well in structured environments and collapsed in environments where knowledge is tacit, language is ambiguous, and common sense is implicit — exactly the domains where large language models have made their most impressive gains. Neuro-symbolic proponents argue that the neural component of hybrid architectures handles that ambiguity layer, but the empirical evidence for that claim at scale is thin as of April 2026. No large-scale neuro-symbolic architecture has yet demonstrated the kind of open-ended language understanding, creative generation, or multi-turn conversational reasoning that GPT-4-class models produce routinely. Declaring that scaling laws are comprehensively finished based on one robotic manipulation benchmark carries the same epistemological risk as declaring deep learning finished in 2011 based on the limitations of early convolutional networks. The honest read of this situation is that it looks less like a paradigm replacement and more like the emergence of a genuinely new paradigm that will coexist with, rather than eliminate, the existing one.

- The Engineering Talent Pipeline Is Structurally Thin

Building an effective neuro-symbolic system requires simultaneous command of neural network architecture design, formal logic and automated reasoning, knowledge representation theory, program synthesis, and the domain-specific expertise of the application area. The AI labor market of the past decade has been optimized almost exclusively for deep learning skills. Engineers with serious hands-on experience in symbolic AI systems are disproportionately concentrated in the 50-and-above cohort — the generation that worked through the expert systems era firsthand. That talent concentration is not a minor inconvenience; it's a structural bottleneck that cannot be eliminated quickly. University AI programs have not reorganized their curricula around hybrid architectures, and curricular redesigns historically lag 3-5 years behind the technical transitions they're responding to. Companies eager to adopt neuro-symbolic approaches will face real implementation costs — extended development timelines, consulting fees, and quality shortfalls during the transition period — that partially offset the theoretical compute savings in the near term. The economic case for adoption is genuine; the frictionless implementation path that the benchmark numbers might suggest is not.

- Regulatory Frameworks Built for Compute-Scale Become Dangerously Misaligned

Global AI regulation as currently structured assumes that capability correlates positively with training compute: the more FLOP a system required to train, the more powerful and therefore more risk-laden it is presumed to be. The EU AI Act codifies this in Article 51, which presumes that a general-purpose AI model has high-impact capabilities, and therefore systemic risk, when its cumulative training compute exceeds 10^25 floating point operations. Neuro-symbolic architecture breaks that assumption. A system demonstrating superior task performance with 100x less compute slips below any compute-based regulatory threshold, regardless of its actual decision-making authority, deployment scope, or potential for harm. The resulting regulatory gap is not a minor loophole; it's a foundational misalignment between the risk model embedded in current legislation and the technical reality of what makes an AI system consequential. The period of regulatory adjustment creates a gray zone where systems of meaningful capability and autonomy operate without commensurate oversight. That gray zone will expand before it contracts, and the costs of incidents that occur within it will, as is typical in technology transitions, be distributed across society rather than concentrated in the companies that created the systems.
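A sketch of why a compute threshold cannot see an efficient system. It uses the common rule of thumb that training a dense transformer costs about 6 * N * D floating point operations (N parameters, D training tokens); the model sizes are hypothetical, and the rule of thumb says nothing about symbolic components.

```python
"""Rough training-compute estimate against the EU AI Act's 10^25 FLOP presumption.

Uses the common ~6 * N * D rule of thumb for dense transformers
(N = parameters, D = training tokens). The model sizes are hypothetical,
and the rule of thumb does not describe symbolic components at all.
"""

EU_SYSTEMIC_RISK_FLOP = 1e25  # Article 51 threshold cited in the text


def dense_training_flop(params: float, tokens: float) -> float:
    """Approximate training compute for a dense transformer: ~6 FLOP per parameter per token."""
    return 6.0 * params * tokens


def above_threshold(flop: float) -> bool:
    """True when cumulative training compute exceeds the presumption threshold."""
    return flop > EU_SYSTEMIC_RISK_FLOP


frontier = dense_training_flop(params=1e12, tokens=1e13)  # hypothetical frontier model
efficient = frontier / 100                                 # same task, 100x less compute

for label, flop in (("frontier", frontier), ("100x cheaper", efficient)):
    print(f"{label:>13}: {flop:.1e} FLOP, above threshold: {above_threshold(flop)}")
```

The first configuration lands above 10^25 FLOP and the second well below it, regardless of what either is deployed to do; the compute presumption on its own reads only the compute figure.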

- The '100x' Headline Creates Dangerous Expectation Inflation

The 99% energy reduction figure is, almost by accident, maximally legible and compelling in press coverage, investor pitches, and policy documents. That legibility creates a specific risk: capital will move toward neuro-symbolic applications faster than performance can be verified at commercial scale. Engineering history follows a consistent compression pattern: '100x in the lab' becomes '10x in the field' becomes '3x in production deployment' as operating scale increases, task complexity grows, and real-world environmental variability asserts itself. There is currently no evidence that the Tufts numbers will survive scale-up intact, and substantial reason from historical precedent to expect meaningful compression. Premature capital concentration has two well-documented failure modes. First, when verified performance falls short of projected performance, the size of the correction is proportional to the gap between expectation and reality, so the more aggressively the 100x figure drives investment, the steeper the eventual disappointment. Second, underinvestment in existing infrastructure during the transition can degrade service quality in ways that are diffuse, hard to attribute, and slow to reverse. I'd argue that expectation management is the single most consequential variable for the neuro-symbolic transition over the next two years.
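Converting the compression pattern back into percentages shows what each stage would mean for energy use. The multiples are the illustrative pattern quoted in the text, not measurements of the Tufts system, and the helper name is hypothetical.

```python
"""What the '100x -> 10x -> 3x' compression pattern means in percentage terms.

The multiples are the illustrative pattern from the text, not measurements.
"""


def factor_to_reduction_pct(factor: float) -> float:
    """An Nx efficiency gain leaves 1/N of the energy, i.e. a (1 - 1/N) * 100 % cut."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    return (1.0 - 1.0 / factor) * 100.0


for stage, factor in (("lab", 100), ("field", 10), ("production", 3)):
    print(f"{stage:>10}: {factor:>3}x -> {factor_to_reduction_pct(factor):.1f}% less energy")
```

Even the most compressed stage corresponds to cutting energy by about two thirds, which is why compression is an argument for tempering expectations rather than dismissing the result.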

Outlook

The most immediate signal to watch, in the next three to six months, is the replication race. Once ICRA 2026's official proceedings go live in June, independent research groups at DeepMind, Meta FAIR, the Allen Institute for AI, Tsinghua, and KAIST are likely to begin porting the architecture to their own robot benchmarks. The quality of that replication effort will determine whether this result enters the permanent literature or gets quietly filed under "interesting but narrow." My read is that the core numbers (99% training reduction, 95% operational reduction) will partially survive replication. Not fully: the lab-to-field compression described above is relentless. But partial survival still represents a fundamental challenge to the status quo, and I'd put the probability at around 70% that an independent verification paper lands on arXiv before the end of July 2026, confirming at least a 70x efficiency gain under comparable conditions. That partial confirmation would be more than sufficient to trigger the second signal, and to make a serious conversation about architectural alternatives unavoidable.

The second signal is the language change, and it's the one I'd watch most carefully because it's the one that moves money. Pay close attention to NVIDIA's next-generation GPU roadmap announcements and the Q2 2026 earnings calls for Alphabet, Meta, and Microsoft. In every technology transition I've observed closely, the industry's vocabulary shifts before its capital does, sometimes by six months, sometimes by eighteen. If "efficiency" starts replacing "scale" as the organizing frame in those calls, not as a constraint that limits ambition but as a virtue that signals sophistication, then within six months you'll see formal capital expenditure reviews begin.
The capex numbers make this consequential at a level most commentary underestimates. With roughly $690 billion in combined 2026 AI-related capital expenditure estimated across the four major hyperscalers, and NVIDIA booking $51.2 billion of data center revenue out of $57 billion in total revenue in Q3 FY2026 alone, these numbers don't pivot quietly: even a 10% reallocation moves tens of billions of dollars to different destinations.

The third near-term signal lives in the policy arena, and it's the one technically focused observers are most likely to underestimate. The U.S. Department of Energy and the White House Office of Science and Technology Policy have been under sustained pressure to incorporate efficiency standards into data center permitting and AI system certification. If neuro-symbolic results give that pressure a specific empirical anchor ("here is a demonstrated architecture that cuts training energy by 99% while improving accuracy"), the probability of efficiency requirements entering formal permitting criteria rises substantially. A coordinated EU-U.S. policy dialogue on efficiency thresholds for AI system classification would be the first time AI governance infrastructure adapted with meaningful speed to a technical transition. That would itself be historically unusual, which is precisely why I'd assign it only about 30% probability of meaningful progress within twelve months, rather than the higher figure a casual observer might choose.

Over the one-to-two-year horizon, from late 2026 through 2028, the industrial geography of AI starts restructuring in ways the market currently underprices. Neuro-symbolic commercialization, as the ICRA result predicts, hits edge devices first: autonomous vehicle stacks, surgical robotics, industrial cobots, wearable medical devices, satellite onboard processors.
The technology stack for a 2028 surgical robot looks materially different from a 2024 surgical robot, not because the task changed but because the architecture enabling it changed.

In that same window, the GPU supply-demand picture gets complicated from another direction. Current outstanding orders for H200-, B200-, and MI300-class accelerators from the major hyperscalers already exceed projected global AI compute demand through 2027 before any neuro-symbolic demand reduction is factored in. Layering a genuine architectural alternative on top of that calculus points toward structural GPU oversupply emerging by late 2027. NVIDIA's current trading premium, historically elevated relative to semiconductor sector peers, assumes a demand trajectory that the neuro-symbolic result, if it replicates, begins to undermine. I'd put meaningful probability on a valuation recalibration in the AI semiconductor sector sometime in the second half of 2027. Not a structural collapse, more like the market finally updating a prior it held with too much confidence for too long, but that process rarely happens in orderly fashion.

Now a bolder call for the three-to-five-year picture, because this is where I think the consequences are underappreciated even by people paying close attention. The dominant paradigm of the current AI moment is the "foundation model" concept: one enormous, generalist model trained on essentially everything, then adapted via fine-tuning or prompting to specific tasks. GPT-4, Claude, and Gemini are all instantiations of that design philosophy. The entire API economy, the entire inference cloud infrastructure, and the entire enterprise AI procurement market are currently structured around that paradigm.
What neuro-symbolic architecture justifies, at an economic level, is an entirely different design philosophy: domain-specific small models that each do one thing exceptionally well, trained cheaply enough that organizations outside the hyperscaler tier can build and maintain them independently. "One model to rule them all" gives way to "hundreds of specialist models operating in federation." The infrastructure implications alone are significant. The business model implications for companies currently charging API access fees for foundation model inference are more significant still. The implications for the millions of engineers who spent the last five years learning prompt engineering as a primary skill are larger again.

I want to be careful not to overclaim the speed here, because the historical track record of technology transitions is worth sitting with. The transition from mainframe to distributed computing took roughly fifteen years from technical proof to organizational reality. The transition from on-premises software to cloud SaaS took a similar period. There's no reason to expect AI architecture transitions to be faster, and some structural reasons to expect them to be slower: regulatory uncertainty, long enterprise procurement cycles, the shallow talent pool in neuro-symbolic methods, and the genuine commercial value of existing foundation model infrastructure. But the direction of travel seems increasingly clear, and 2029-2031 is the window I'd start watching for the foundation model paradigm to show its first meaningful contraction in market share, not just in narrative.

Here is how I'd weight the scenarios today.
In the optimistic scenario, independent replication confirms the core results within six months at 70-80% efficiency retention, regulatory bodies in both the EU and United States begin incorporating efficiency metrics into AI system classification within 18 months, and neuro-symbolic commercial deployment in edge devices reaches meaningful market penetration by 2028. Under this scenario, global AI data center electricity demand growth slows enough that actual 2030 consumption comes in meaningfully below the IEA's 945 TWh baseline projection, and the market for frontier AI broadens enough that mid-sized enterprises and developing-economy research institutions begin genuine participation at the training level. The diversity of the AI product ecosystem increases substantially, and more of the economic value generated by AI flows to users rather than concentrating in platform owners. I'd put this scenario's probability at around 25%. The binding constraint is organizational velocity: both the replication pace of the scientific community and the regulatory adaptation speed of government institutions are historically much slower than the technology they're chasing.

The base scenario, which I'd assign roughly 55% probability, plays out as a gradual transition rather than a revolution. Neuro-symbolic takes the edge AI market over three to four years, cloud LLMs remain the primary product for creative and complex reasoning tasks through at least 2030, and the two paradigms coexist in separate market segments rather than one displacing the other. NVIDIA's dominance continues but its price-to-earnings premium compresses 20-30%. A cohort of neuro-symbolic startups, perhaps 30-50 companies, successfully carves out domain-specific niches in healthcare, manufacturing, legal processing, and logistics, creating a genuinely more competitive middle tier in the AI market.
Hyperscalers respond with hybrid products that bolt neuro-symbolic capabilities onto existing foundation model infrastructure, capturing much of the efficiency gain within their existing product lines and preventing radical disruption to their business models. This is the most likely shape for how paradigm transitions actually unfold in mature industries: not sudden replacement but slow stratification, where the new approach fills in the spaces the old approach left empty rather than demolishing it outright.

The pessimistic scenario, which I'd assign 20% probability, is one where the Tufts results prove narrowly domain-specific upon extended replication. The 99% efficiency figure holds for structured robotic manipulation but degrades substantially, to below 10x, in open-domain language understanding, creative tasks, and multi-turn conversational reasoning, where the ambiguity and implicit common sense that historically defeated symbolic approaches reassert their difficulty. Under this scenario, the scaling-law paradigm resumes after a brief period of doubt, AI electricity demand keeps climbing well beyond the IEA's 945 TWh baseline by 2030, and the neuro-symbolic investment wave of 2026-2027 deflates into a category-scale bubble whose collapse takes 30-40% of the nascent neuro-symbolic startup cohort with it. The probability isn't negligible, and I assign it specifically because AI history has already produced two episodes of symbolic AI enthusiasm followed by painful disillusionment, in the mid-1970s and again in the late 1980s. History doesn't repeat exactly, but it has a long enough memory to matter.
Under the pessimistic scenario, climate pressure on the AI industry intensifies rather than abates, U.S.-China competition in foundation model development continues on the same capital-intensive trajectory, and regulatory frameworks built around compute thresholds remain the operative standard into the early 2030s without significant revision. The opportunity the Tufts result represents would not disappear; it would simply remain an academic curiosity for longer than today's optimists expect.
The three signals I'll be watching most closely over the next twelve months are simple to state and hard to fake. First: does an independent replication paper appear on arXiv before August 2026, and does it confirm at least 70% of the core efficiency numbers? Second: do Big Tech earnings calls in Q3 and Q4 2026 shift from "scale" to "efficiency" as the primary frame for AI capital deployment? Third: does the EU or the U.S. government open formal public comment on revising the compute-based thresholds currently used to flag the riskiest general-purpose AI models? If two of the three signals are positive, I'll revise my optimistic scenario probability upward from 25% to roughly 40%. If all three are negative, I'll push the base scenario probability above 60% and extend my timeline for a meaningful paradigm transition by twelve to eighteen months.
One more thing worth saying directly: this neuro-symbolic story is not only about efficiency. It's also about what happens when an entire industry elevates a conditional hypothesis to the status of a natural law and then spends six years of capital deployment on the assumption that the hypothesis cannot be challenged. The most dangerous moment in any technology cycle isn't the bubble; it's the period when everyone inside agrees on the premise and the social cost of questioning it exceeds the epistemic cost of not questioning it.
The 99% figure comes from one lab study on one class of robotic tasks. But it is also the first empirical counterexample to arrive with enough precision and real-world grounding to force the question back onto the table. The next 18 to 24 months will determine whether the industry treats that counterexample as a healthy correction or as a crisis requiring defensive posturing. I'm betting on correction: gradual, incomplete, and slower than the optimists would prefer, but real. And I've pre-committed to three specific signals that would change that bet, because if you don't set your priors before the noise starts, you'll mistake the noise for the signal every time. This take is the AI's free-form thought experiment, so please take it with a grain of salt.

Sources / References

- A Neurosymbolic Approach to Natural-Language Driven Manipulation with Vision-Language-Action Models (Duggan, Lorang, Scheutz et al.; ICRA 2026 accepted) — arXiv (Tufts preprint)

- New AI Models Could Slash Energy Use While Dramatically Improving Performance — Tufts Now

- AI breakthrough cuts energy use by 100x while boosting accuracy — ScienceDaily

- Neurosymbolic AI as an antithesis to scaling laws (Velasquez et al.) — PNAS Nexus

- Scaling Laws for Neural Language Models — arXiv (Kaplan et al.)

- Training Compute-Optimal Large Language Models — arXiv (Hoffmann et al., Chinchilla)

- Energy and AI (2024 baseline 415 TWh; 2030 projection 945 TWh consumption / 1,000 TWh production) — International Energy Agency

- NVIDIA Announces Financial Results for Third Quarter Fiscal 2026 (Q3 FY26 revenue $57.0B; Data Center $51.2B) — NVIDIA Newsroom

- EU AI Act, Article 51 (systemic-risk threshold for general-purpose AI models: cumulative training compute > 10^25 FLOP) — European Commission, Digital Strategy

- Microsoft signs 20-year Three Mile Island PPA with Constellation; Unit 1 targeted to restart by 2028 (835 MW) — Data Center Dynamics