Australia Kicked Everyone Under 16 Off Instagram — But Are Kids Actually Safer Now?

Summary

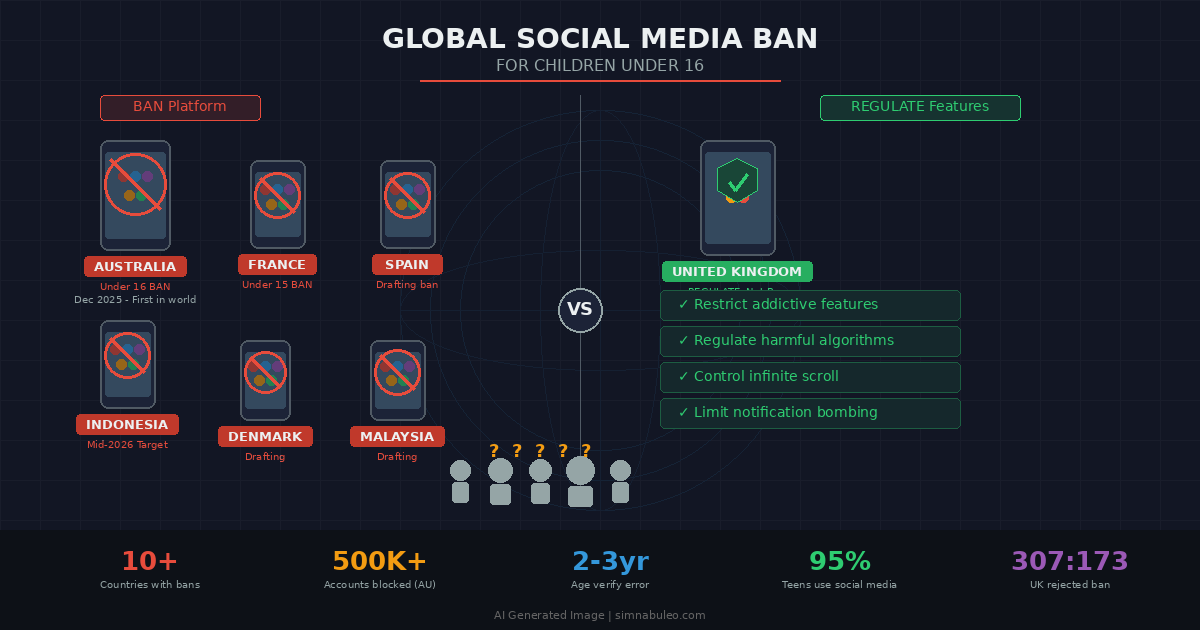

From Australia to the world, governments are banning children from social media — but the UK chose the opposite path

Key Points

In December 2025, Australia became the first country to ban social media for children under 16; France, Indonesia, Spain, and more than 10 other countries are moving to follow suit

The UK House of Commons rejected a ban by 307 votes to 173, opting instead to regulate specific addictive platform features

Meta's facial age estimation technology shows a typical 2-3 year margin of error, yet in some cases it has identified 11-year-olds as 30 and blocked legitimate users

Meta blocked over 500,000 underage accounts in Australia in the first month, but many teens have already found workarounds

Bans may create a water balloon effect, pushing children to unregulated platforms and dark-web-adjacent services with zero safety features

Biometric data collection for age verification poses new privacy threats, with Reddit filing a constitutional challenge in Australia

The author predicts that by 2027, more than 70% of major developed nations will regulate specific harmful features rather than impose blanket bans

Age verification infrastructure built for social media bans could spread to gaming, streaming, and e-commerce, leading to an age-stratified internet

Positive & Negative Analysis

Positive Aspects

- Elevated Big Tech Accountability

After Australia's ban, Meta blocked 500K+ underage accounts and invested in age verification systems previously considered unthinkable. For the first time, companies are treating child protection as a top priority rather than just preaching self-regulation.

- Practical Tool for Parents

The legal ban gives parents a clear starting point for discussing digital device use with their children. Australian schools report fewer smartphone-related conflicts since the ban took effect, which suggests that setting a shared social baseline has educational value.

- International Standards Formation

With multiple countries testing different approaches at the same time, policymakers can develop evidence-based policy on children's digital rights. Together with the EU AI Act, these experiments are beginning to shape a comprehensive global framework for child online protection.

- Youth Mental Health Improvement Potential

If bans and feature regulations work together effectively, meaningful improvements in youth mental health indicators could emerge by 2029. Child safety certification could become a new marketing tool for companies rebuilding parental trust.

Concerns

- Water Balloon Effect and Circumvention

Within two months of Australia's ban, many teens found workarounds. Children kicked off regulated platforms may migrate to small messenger services or dark-web-adjacent platforms with zero safety features, making the situation worse.

- Privacy Invasion Risk

Selfie-based facial recognition, government ID linkage, and biometric data collection are building surveillance infrastructure under the banner of child protection. A hack could leak identity information for tens of millions of children.

- Deepening Digital Inequality

Tech-savvy families can easily circumvent bans with VPNs, while less privileged children are excluded from the digital world. Because social media also serves as a channel for education and information access, bans could widen the digital divide.

- Marginalized Youth Isolation

For LGBTQ+ youth and marginalized teenagers, online communities may be their only lifeline for safe information and connection. Bans shut down these positive functions along with everything harmful.

- Technical Limitations Exposed

Meta's age estimation has identified 11-year-olds as 30 and blocked legitimate 16-year-old users. Even the typical 2-3 year error margin is wide enough to misclassify users near the 16-year cutoff, which raises serious questions about whether enforcement is technically feasible.

Outlook

In the immediate months ahead, this battlefield is going to get much hotter. France has committed to implementing its under-15 ban before the September school year, making summer 2026 a period of intense debate over implementation specifics across Europe. Indonesia is also targeting mid-2026 for legislation, potentially becoming the first large-scale Asian implementation. The key thing to watch is Australia's early data — the Australian eSafety Commissioner's six-month report, due in June 2026, will be a critical inflection point.

I predict this report will reach substantially skeptical conclusions about the ban's effectiveness. Given the technical limitations already exposed (facial age estimation's two-to-three-year margin of error, widespread circumvention, and migration to unregulated platforms), there's a strong probability the finding will amount to "the ban was implemented, but the results are unclear." Reddit's constitutional challenge in Australia's High Court should also reach a decision in the second half of 2026, and if the court rules against the law, it would send a chilling signal to every country pursuing similar bans.

Looking one to two years out, the industry landscape will undergo major restructuring. The most likely scenario is a shift from total bans to feature-specific regulation. The UK has already chosen this path, and the EU is also moving toward individually regulating addictive platform design features (infinite scroll, autoplay, notification bombardment) in conjunction with its August 2026 AI Act implementation. My prediction is that by 2027, more than 70% of major developed nations will adopt a model that selectively regulates harmful features rather than an age-based blanket prohibition.

As this transition unfolds, Big Tech companies will inevitably adjust their strategies. Meta has already introduced youth-specific protected accounts on Instagram, and TikTok has made its 60-minute usage limit a default setting. By around 2027, we will likely see the emergence of an entirely new market category: children's social media. Just as Netflix created separate kids' profiles, social media will probably evolve into a dual structure with adult and children's versions.

Looking three to five years out, this debate expands into much more fundamental questions. What should the digital age of majority be? Currently, different countries use 15, 16, and 18, but by 2028-2029, discussions around an internationally unified digital age standard will likely emerge. The UN Committee on the Rights of the Child has already issued General Comment No. 25 on children's rights in the digital environment.

In the bull case, Australia's and France's experiments produce positive data and tech companies actively cooperate, leading to significant improvements in youth mental health indicators by 2029. In the base case, most ban laws are implemented with limited effectiveness, and countries converge on the UK model of feature-specific regulation. By 2028, major nations pass legislation along the lines of a "Harmful Algorithm Prohibition Act," and the social media industry sees a revenue decline of roughly 15-20%.

The bear case is genuinely worrying. Bans proliferate but technical enforcement fails, children migrate to far more dangerous platforms, and a biometric database gets hacked, leaking identity information for tens of millions of children. The cause of child protection itself loses public credibility.

There are also cascading effects that are being overlooked. Once age verification infrastructure is in place, it will likely spread to online gaming, streaming, and e-commerce, creating an age-stratified internet that clashes with foundational principles of openness and anonymity. Education systems will also need fundamental redesign, shifting from "how to safely use social media" to "how to navigate the digital world without it." Finland has required media literacy education since 2025, and education may ultimately beat prohibition, though these effects take a generation to materialize.

Sources / References

- Australia's social media ban is a high-stakes experiment

- 2 months in, how are Australia's age restrictions for social media working?

- Meta urges Australia to rethink under-16 social media ban after blocking over 500,000 accounts

- These are the countries moving to ban social media for children

- Social media bans for minors - what is the global picture?

- UK Parliament Votes Down Social Media Ban for Children

- Social Media and Youth Mental Health: The U.S. Surgeon General's Advisory