AI Is Switching Off Students' Brains — Why Thinking Skills Are Dying in Classrooms Where 92% Use AI

Summary

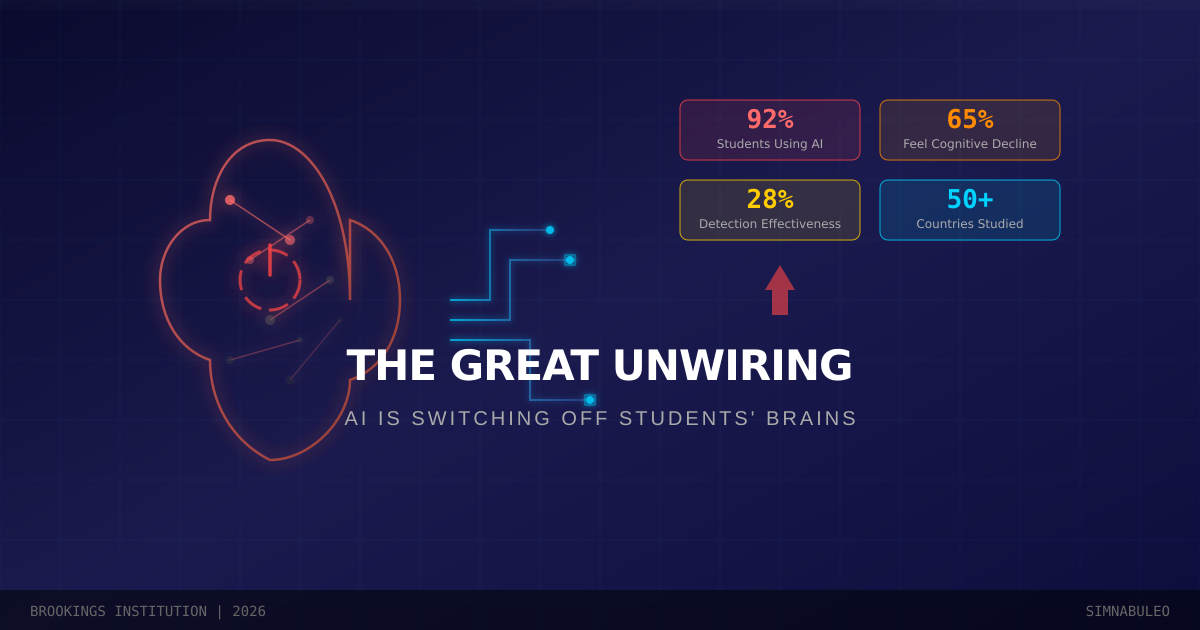

A Brookings report spanning 50 countries and 500+ experts warns: in classrooms where 92% of students use AI, grades are rising while thinking skills are collapsing. Detection is failing, bans are unenforceable, and the cognitive doom loop only deepens.

Key Points

The Cognitive Doom Loop — AI Dependency's Vicious Cycle

The Brookings Institution identified a "cognitive doom loop" where students delegate tasks to AI, see grades improve, delegate more, and experience progressive atrophy of thinking skills. Sixty-five percent of students reported sensing their own cognitive decline, while teachers describe "digitally induced amnesia" — students unable to recall the content of assignments they submitted because it never entered their minds. This vicious cycle is not a matter of individual willpower but a structural design flaw in the educational system itself.

Passenger Mode Goes Viral — Zombie Learning in the Classroom

Brookings researchers coined "passenger mode" for students who are physically present in classrooms but effectively disengaged from learning, doing only the bare minimum. ASU's analysis found that 45% of points in in-person biology courses could be earned through AI cheating, which suggests that even face-to-face instruction is not safe. With 92% of students using AI and 88% deploying it on graded work, this is not deviant behavior by a few but a near-universal phenomenon transforming the fundamental nature of education.

Detection's Failure and the Birth of New Discrimination

AI cheating detection performs poorly: traditional plagiarism policies are rated 49% effective, and AI-specific policies just 28%. More critically, detection tools produce disproportionately high false positives for non-native English speakers and neurodivergent students. Students are now using "humanizer" AI services to evade detection, escalating an arms race that institutions are already losing.

Grade Inflation Meets Competence Deflation — The Paradox

The most unsettling paradox of the AI education crisis is that grades are rising while actual competence falls. AI-compatible assignments show the largest grade improvements among bottom-quartile students, but this reflects increased AI dependency, not learning gains. If report cards are measuring AI's ability rather than students', the entire education system is engaged in self-deception.

The Cognitive Divide — The Next Evolution of the Digital Divide

OECD data shows that South Korean students rank among the world's best academically, yet only 25% can distinguish fact from opinion, versus the OECD average of 47%. As AI dependency grows, wealthy families will access "AI-free" premium education while most students are handed to AI tutors, hardening a dual structure in which the digital divide evolves into a "cognitive divide."

Positive & Negative Analysis

Positive Aspects

- Unprecedented potential for personalized learning

AI can adjust educational content in real time to match each student's pace, level, and style. A student in a rural village in a developing country gaining access to MIT-quality tutoring would be unprecedented in the history of education. The Brookings report itself advocates not for blanket bans but for using AI as a "mirror for thinking," enabling students to examine logical weaknesses, compare approaches, and expand their reasoning.

- Democratization of educational access

Hundreds of millions worldwide lack access to quality education. AI tutors are available 24/7, free of charge, with multilingual support and accessibility features for students with visual or hearing impairments. The potential to demolish geographic and economic barriers exceeds any previous technology, with platforms like Khan Academy's Khanmigo already serving millions.

- Reducing teachers' administrative burden

AI can automate repetitive tasks like grading, attendance tracking, and individual feedback generation, freeing teachers to focus on actual education — dialogue with students, critical thinking guidance, and emotional support. Teacher burnout is a global crisis, and if AI can absorb part of that burden, it opens the door to fundamental improvement in educational quality.

- Catalyst for rethinking educational paradigms

Paradoxically, the AI crisis is forcing the fundamental question "What is education for?" Ohio's K-12 AI policy mandate, renewed interest in Finland's project-based learning, and the global discourse sparked by the Brookings report are shaking educational systems that had been running on decades of inertia.

Concerns

- Structural impossibility of breaking the cognitive doom loop

The cycle, in which AI dependency raises grades, higher grades encourage more dependency, and growing dependency erodes cognition, cannot be broken by individual willpower alone. With 65% of students sensing their own cognitive decline yet unable to stop, the mechanism resembles addiction. As long as education systems operate on grade-based metrics, there is zero incentive to reduce AI usage that demonstrably raises scores.

- Structural limitations of detection tools and deepening discrimination

With AI-specific plagiarism policies rated just 28% effective and detection tools producing higher false positive rates for non-native speakers and neurodivergent students, the current approach is fatally flawed. Students are countering with AI humanizer services, escalating an arms race. Strengthening detection concentrates harm on the most vulnerable populations.

- Potentially irreversible developmental cognitive damage

The developing brain operates on neuroplasticity principles, strengthening frequently used neural pathways and pruning unused ones. A generation that outsources critical thinking, problem-solving, and logical reasoning to AI may never strengthen those pathways. The Brookings report's "Great Unwiring" framing strongly suggests the damage could be permanent.

- A new dimension of inequality — the cognitive divide

If wealthy families access "AI-free" premium education while average households receive AI-dependent public schooling, the existing digital divide evolves into a cognitive divide. The pattern of Silicon Valley CEOs sending children to screen-free schools is already documented and will likely repeat in the AI education space.

- Long-term erosion of democratic foundations

A generation with critical thinking deficits entering the electorate means weakened capacity to identify misinformation, diminished comprehension of complex policy, and uncritical acceptance of AI-generated content. If the Great Unwiring becomes generational, it transcends education, becoming a civilizational crisis that erodes the very foundations of democratic governance and civil society.

Outlook

In the short term, over the next six months to a year, this crisis will deepen before it improves. The release of GPT-5-class models will make AI's ability to circumvent detection even more sophisticated, accelerating student dependency. With AI detection tools currently rated at just 28% effectiveness, more powerful AI models could push that figure below 10%.

At least ten additional U.S. states are expected to follow Ohio's lead in mandating K-12 AI policies, but translating policy into enforceable classroom practice takes time. The second half of 2026 will likely see a wave of national AI education guidelines from OECD member countries, and the EU is set to finalize its AI Act education-specific provisions by the end of 2026.

South Korea's Ministry of Education has made "top-three AI nation" status a core 2026 policy goal, but the focus remains on how to bring AI into classrooms rather than how to protect students' thinking capacity. The OECD finding that only 25% of Korean 15-year-olds can distinguish fact from opinion (versus the 47% OECD average) is likely to worsen in the AI era, as the inertia of test-focused cramming culture blocks the transition to critical thinking education.

The most realistic near-term scenario is that the arms race between cheating and detection will drain institutional resources, leaving no energy for the educational innovation that is desperately needed. U.S. educational institutions are estimated to spend roughly $500 million per year on AI detection software as of 2025, money that could have gone to teacher development and curriculum innovation.

Another short-term phenomenon to watch is the emergence of "two-speed education": a widening performance gap between schools that proactively establish AI policies and those that stand by, which could fundamentally alter how parents choose schools.

In the medium term, one to three years out, a full-blown paradigm split in education will emerge. Elite institutions in developed countries will likely package "AI-free" education as a premium product. The pattern of Silicon Valley tech CEOs sending their children to screen-free schools has already been documented, and this dynamic will repeat itself in the AI education space. According to the Wall Street Journal, Waldorf School waiting lists have grown by 40% since 2024, and demand for such "tech-free education" will surge as the AI crisis deepens. Wealthy families will pay for "one-on-one Socratic dialogue with human tutors" to develop their children's thinking skills, while public school students are handed off to AI tutors, a dual structure that crystallizes a new dimension of educational inequality. The digital divide will evolve into a "cognitive divide."

The Brookings report's proposed "context-dependent AI integration" policies may become mainstream in this timeframe, but given the reality of teacher capacity development (particularly in the U.S., where the Department of Education has lost roughly 50% of its staff through DOGE restructuring, severely impairing its policy-making and enforcement capabilities), the gap between policy and practice will be substantial.

Another medium-term shift to watch is the fundamental restructuring of university admissions and hiring markets. As standardized tests like the SAT and GRE lose credibility in the AI era, "AI-cheat-proof" assessment methods such as oral examinations, project portfolios, and live problem-solving evaluations will rise. Some universities have already begun introducing "AI-free exams" (handwritten essays under supervision), and this trend will accelerate. The labor market is shifting too: Big Tech companies like Google and Meta are increasing the weight of "live coding" and "whiteboard problem-solving" in hiring to verify the ability to think without AI. In the medium term, "certified ability to think without AI" could become a new premium qualification.

Looking three to five years ahead, the bull case envisions the emergence of a symbiotic model between AI and human thinking. Finnish-style educational innovation (project-based learning, collaborative assignments, critical thinking-centered curricula) evolves for the AI age, producing an educational model where students use AI as a "thinking partner" while maintaining independent reasoning. AI literacy becomes a foundational competency on par with reading and writing, embedded across curricula worldwide, with "metacognition" (awareness of one's own thinking process) becoming a mandatory subject. Singapore's "21st Century Competencies Framework" gets upgraded for the AI era and becomes a global standard, spawning a $50 billion annual "AI literacy education" industry. In this scenario, the Great Unwiring paradoxically becomes the most powerful catalyst for educational innovation, with the crisis forcing education systems that have stagnated for decades to evolve.

In the base case, the current chaos settles somewhat, but the cognitive divide between affluent and disadvantaged students, and between developed and developing nations, becomes entrenched. As the AI-dependent generation enters the labor market, employers begin treating the "ability to perform work without AI" as a premium skill, creating the paradox where people who use AI less command higher value. The cognitive gap between elites educated AI-free and the AI-dependent majority translates directly into income disparity, potentially pushing education-based social mobility to historic lows. The gap between nations also widens: countries like Finland, Estonia, and Singapore that rapidly establish AI education policy maintain "cognitive competitiveness," while slower-responding nations experience "cognitive capital flight."

In the bear case, cognitive damage becomes a generational phenomenon. The Great Unwiring generation grows into the primary participants in democracy, and critical thinking deficits ripple into political decision-making: weakened ability to identify fake news, diminished comprehension of complex policy, and uncritical acceptance of AI-generated information become pervasive. As the 2028 U.S. presidential election becomes inundated with AI-generated disinformation and deepfakes, voters with weakened critical thinking may be unable to distinguish truth from fabrication. Worse, a generation that uncritically accepts AI-produced information entering the workforce means "AI hallucinations" could infiltrate corporate and government policy decisions. In this scenario, AI's Great Unwiring is not merely an education problem but a civilizational crisis eroding the very foundations of democracy and civil society. Humanity losing the capacity to think because of a tool it built itself: that is the cruelest irony of the AI age.

Sources / References

- A New Direction for Students in an AI World: Prosper, Prepare, Protect — Brookings Institution

- Students can't reason: Teachers warn AI is fueling a crisis in kids' ability to think — Fortune

- In-Person Classes Aren't Safe From AI Cheating Boom — Inside Higher Ed

- Report: The risks of AI in schools outweigh the benefits — NPR

- OECD Digital Education Outlook 2026 warns of AI learning paradox — DailyAn

- Most teens believe their peers are using AI to cheat in school — Washington Post

- To avoid accusations of AI cheating, college students are turning to AI — NBC News